UAVSAR (Unmanned Aerial Vehicle Synthetic Aperture Radar)

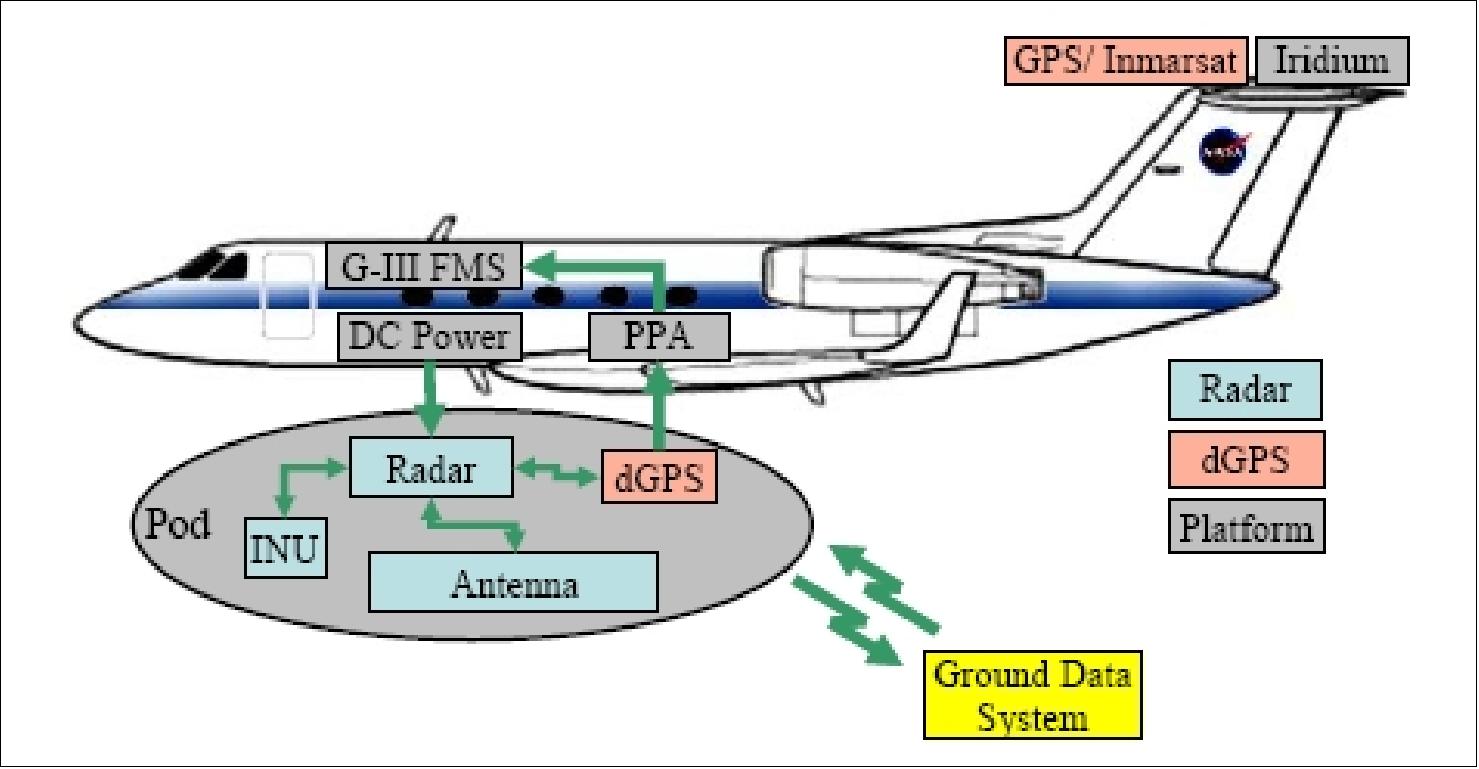

UAVSAR is a NASA L-band SAR (Synthetic Aperture Radar): a compact, pod-mounted polarimetric instrument for interferometric repeat-track observations that is being developed at JPL and at the NASA/DFRC (Dryden Flight Research Center) in Edwards, CA. The radar will be designed to be operable on a UAV (Unpiloted Aerial Vehicle) but will initially be demonstrated on a NASA Gulfstream III aircraft (C-20A/G-III). The system will nominally operate at altitudes of ~13,800 m. The program was initiated in the 2003/4 timeframe as an Instrument Incubator Project (IIP) funded by ESTO (Earth Science Technology Office) of NASA. 1) 2) 3) 4) 5) 6)

The primary objective of the side-looking UAVSAR instrument is to accurately map crustal deformations associated with natural hazards, such as volcanoes and earthquakes. Topographic information is derived from phase measurements that, in turn, are obtained from two or more passes over a given target region. The frequency of operation, approximately 1.26 GHz, results in radar images that are well-correlated from pass to pass. Polarization agility facilitates terrain and land-use classification.
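
For orientation, the link between the operating frequency and the phase measurements can be sketched numerically. The snippet below is a minimal illustration, not the UAVSAR processor: it assumes the standard repeat-pass relation in which one full 2π phase cycle corresponds to half a wavelength of line-of-sight motion, and it ignores topographic, atmospheric, and baseline contributions. The function names and the sign convention are assumptions made for this example.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0
UAVSAR_FREQ_HZ = 1.26e9  # approximate operating frequency quoted in the text


def wavelength_m(freq_hz: float) -> float:
    """Free-space wavelength for a given radar frequency."""
    return SPEED_OF_LIGHT_M_S / freq_hz


def los_displacement_m(delta_phase_rad: float,
                       freq_hz: float = UAVSAR_FREQ_HZ) -> float:
    """Line-of-sight displacement implied by a repeat-pass phase change.

    The signal travels out and back, so 2*pi of phase equals half a wavelength.
    The sign convention (positive phase = positive displacement) is an
    assumption of this sketch.
    """
    return wavelength_m(freq_hz) * delta_phase_rad / (4.0 * math.pi)


if __name__ == "__main__":
    print(f"wavelength: {wavelength_m(UAVSAR_FREQ_HZ) * 100:.1f} cm")
    print(f"one fringe: {los_displacement_m(2 * math.pi) * 100:.1f} cm")
```

At 1.26 GHz the wavelength is about 23.8 cm, so under these assumptions one full interferometric fringe corresponds to roughly 11.9 cm of line-of-sight motion.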

The design of the UAVSAR focuses on two key challenges:

• First, repeat pass measurements need to be taken from flight paths that are nearly identical. This instrument utilizes real-time GPS that interfaces with the platform flight management system (FMS) to confine the repeat flight path to within a 10 m tube over a 200 km course in conditions of calm to light turbulence. The FMS is also referred to as the PPA (Platform Precision Autopilot).

• Secondly, the radar vector from the aircraft to the ground target area must be similar from pass to pass. This is accomplished with an actively scanned antenna designed to support electronic steering of the antenna beam with a minimum of 1° increments over a range exceeding ±15° in the flight direction.
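
The two constraints above can be illustrated with a small sketch. It is not the flight software: it assumes that the "10 m tube" is a 10 m diameter around the reference track, that the steering limit is exactly ±15° (the text only says the range exceeds this), and that the beam is steered opposite to the aircraft yaw offset in 1° steps. All names are hypothetical.

```python
import math
from typing import Optional

TUBE_DIAMETER_M = 10.0   # from the text; treated as a diameter (assumption)
MAX_STEER_DEG = 15.0     # assumed limit; the text says the range exceeds +/-15 deg
STEER_STEP_DEG = 1.0     # assumed steering increment


def within_tube(cross_track_m: float, vertical_m: float,
                diameter_m: float = TUBE_DIAMETER_M) -> bool:
    """True if the offset from the reference track lies inside the tube."""
    return math.hypot(cross_track_m, vertical_m) <= diameter_m / 2.0


def steering_command_deg(yaw_offset_deg: float) -> Optional[float]:
    """Quantized beam-steering angle that cancels a yaw offset.

    Returns None when the required angle falls outside the assumed range.
    """
    steer = round(-yaw_offset_deg / STEER_STEP_DEG) * STEER_STEP_DEG
    if abs(steer) > MAX_STEER_DEG:
        return None
    return steer


if __name__ == "__main__":
    print(within_tube(3.0, 4.0))        # 5 m from the track centre
    print(steering_command_deg(3.4))    # small yaw offset
    print(steering_command_deg(20.0))   # beyond the assumed steering range
```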

The UAVSAR radar is designed from the beginning as a miniaturized polarimetric L-band radar for repeat-pass and single-pass interferometry with options for along-track interferometry and additional frequencies of operation. By designing the radar to be housed in an external unpressurized pod, it has the potential to be readily ported to other platforms such as the Predator or Global Hawk UAVs. Initial testing is being carried out with the NASA Gulfstream III aircraft, which has been modified to accommodate the radar pod and has been equipped with precision autopilot capability developed by NASA Dryden Flight Research Center.

The UAVSAR project will also serve as a technology test bed. As a modular instrument with numerous plug-and-play components, it will be possible to test new technologies for airborne and spaceborne applications.

Status of the UAVSAR implementation and campaign services

• December 13, 2021: NASA and NOAA (National Oceanic and Atmospheric Administration) scientists are teaming up to test remote sensing technology for use in oil spill response. 7)

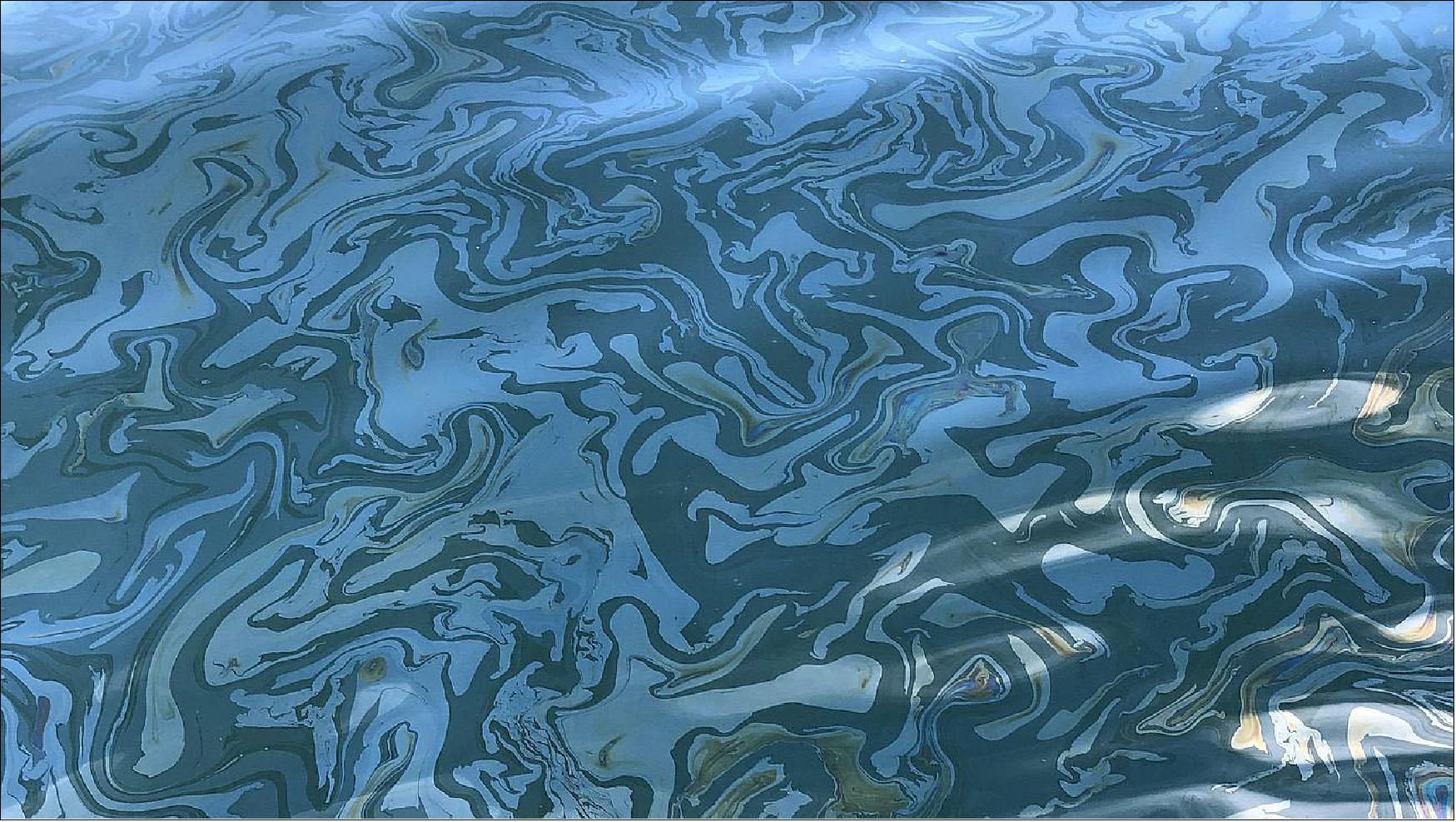

- Just off the coast of Santa Barbara, California, thousands of gallons of oil seep through cracks in the seafloor and rise to the surface each day. But this isn’t a disaster zone: It’s one of the largest naturally occurring oil seeps in the world and is believed to have been active for thousands of years.

- The reliability of these seeps makes the area an important natural laboratory for scientists, including those with the Marine Oil Spill Thickness (MOST) project, a collaboration between NASA and the National Oceanic and Atmospheric Administration (NOAA) to generate operational automated oil spill detection, oil extent geospatial mapping analytics, and oil thickness characterization applications.

- The MOST team is working to develop a way for NOAA – the lead federal agency for detecting and tracking coastal oil spills – to use remote sensing data to determine not just where oil is, but where the thickest parts are, one of the critical missing pieces to direct response and remediation activities. The team recently concluded a fall field campaign in Santa Barbara.

- “We’re using a radar instrument called UAVSAR to characterize the thickness of the oil within an oil slick,” said Cathleen Jones, MOST co-investigator at NASA’s Jet Propulsion Laboratory in Southern California. “This thicker oil stays in the environment longer and damages marine life more than thin oil. And if you know where it is, you can direct responders to those problematic areas.”

- NASA's UAVSAR (Uninhabited Aerial Vehicle Synthetic Aperture Radar) attaches to the fuselage of an airplane and collects a roughly 12-mile-wide (19-kilometer-wide) image of an area.

- But SAR images are unlike those acquired from other sensors. The instrument sends radar pulses down to the surface of the ocean, and the signals that bounce back are used to detect roughness, caused by waves, at the ocean’s surface. When oil is present, it dampens the waves, creating areas of smoother water. These smooth, oily areas appear darker than the surrounding clean water in the SAR imagery – the thicker the oil, the darker the area will appear.

- The airborne observations must then be validated, meaning the scientists have to go to the same area on a boat to measure the thickness of the oil by hand.

- “We put the sampler, which is like a tube that’s open on both ends, in the water and let it sit there for a moment,” said Ben Holt, also a JPL co-investigator for MOST. “And then when you close off the tube, a small layer of oil and water is collected. After the oil layer settles, you can measure the oil layer thickness and compare that with the UAVSAR observations to see how closely they match up.”

- As another key layer of validation, the ship deploys a drone carrying an optical sensor, which is capable of observing the slick and measuring its thickness over a broader area than can be observed from the ship.
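
The radar contrast described above (oil-covered water appearing darker than clean water) is often expressed as a ratio in decibels. The sketch below is an illustration only: the backscatter values are made-up inputs, the function names are hypothetical, and the code does not estimate thickness. Relating contrast to thickness is what the boat and drone validation described above is for.

```python
import math
from typing import Dict, List


def to_db(power_ratio: float) -> float:
    """Convert a linear power ratio to decibels."""
    if power_ratio <= 0:
        raise ValueError("power ratio must be positive")
    return 10.0 * math.log10(power_ratio)


def damping_ratio_db(clean_sea: float, slick: float) -> float:
    """Contrast between clean-sea and slick backscatter (linear power units)."""
    return to_db(clean_sea / slick)


def rank_darkest_first(samples: Dict[str, float], clean_sea: float) -> List[str]:
    """Order sample names from strongest to weakest contrast."""
    return sorted(samples,
                  key=lambda name: damping_ratio_db(clean_sea, samples[name]),
                  reverse=True)


if __name__ == "__main__":
    # Hypothetical linear backscatter values, for illustration only.
    clean = 0.1
    slick_samples = {"site_a": 0.05, "site_b": 0.01, "site_c": 0.08}
    for name in rank_darkest_first(slick_samples, clean):
        print(name, round(damping_ratio_db(clean, slick_samples[name]), 1), "dB")
```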

How MOST Was Born

- Initially, UAVSAR seemed an unlikely candidate to track or characterize oil. It was developed to measure changes to Earth’s surface – for instance, after an earthquake or volcanic eruption. But during the 2010 Deepwater Horizon oil spill in the Gulf of Mexico, Elijah Ramsey, a scientist with the U.S. Geological Survey, reached out to Jones about trying to use the instrument to identify the oil coming ashore in Louisiana.

- “The indications were that it wouldn’t work because the instrument uses too long of a wavelength for that purpose,” Jones said. “But we said, ‘Let’s try it anyway.’”

- She and Holt were glad they did.

- “It was just incredible what you could see with UAVSAR because it is so much more sensitive than satellite-based instruments,” Jones said. “UAVSAR is more sensitive to low returns from oil-covered areas than typical satellite SAR instruments. So we were able to identify the oil and to calculate the oil concentrations present.”

- Their findings were a proof of concept and were published in 2012. In subsequent years, the feasibility of scaling this innovation for further risk analysis and assessment has been examined.

- In 2018, Frank Monaldo, a scientist at the University of Maryland who had worked with NOAA for many years, partnered with Jones, Holt, and a team from NOAA, the U.S. Coast Guard, and the private sector, in addition to researchers in Canada and Norway, to formulate the MOST proposal. In 2019, NASA’s Disasters program selected this concept for implementation to reduce disaster risk and strengthen resilience, and the four-year MOST project was launched.

Unexpected, Real-World Deployment

- As the MOST team was preparing to head out for their fall field campaign, scheduled to kick off the first Monday in October, authorities were responding to reports of an oil spill off the coast of Huntington Beach, California – just 130 miles (209 km) south of the Santa Barbara field campaign location.

- Several members of the MOST team quickly became involved with providing data on the spill. What was supposed to have been a practice campaign in controlled circumstances quickly became a real-world test of UAVSAR’s utility during an actual oil spill emergency.

- “It was really different from doing a practice run because people were overwhelmed running the response,” Jones said. “But when NOAA got the UAVSAR data, they used it to delineate oil, and then they released a Marine Pollution Surveillance Report based on it. It was the first time that had ever been done using data from an airborne instrument,” she said.

- While UAVSAR proved valuable in this situation, the deployment couldn’t replace the field campaign for scientific purposes, because they were unable to take measurements by boat. “We really had no in-situ measurements for comparison,” said Holt. “The real value was the efforts by Cathleen and other members of the UAVSAR team to get the UAVSAR data processed and uploaded and then utilized by NOAA.”

- The fall field campaign took place several weeks later in Santa Barbara.

What’s Next?

- Although UAVSAR’s capabilities in oil spill thickness detection are useful, flying an airplane over every oil slick isn’t practical. So once the data from the spring and fall field campaigns is validated, it’ll be used to train algorithms to calculate oil thickness from SAR data automatically.

- UAVSAR is a prototype for an upcoming satellite mission called NASA-ISRO Synthetic Aperture Radar, or NISAR, which is a partnership between NASA and the Indian Space Research Organisation (ISRO). If all goes according to plan, the methods and algorithms developed during the MOST project can be applied to data from the new mission, as well.

- “The idea here is that in two years or so when the MOST project is over, we’ll have a prototype system for detecting oil thickness that NOAA can use and distribute during oil spill response,” said Jones. “With NASA partnering with NOAA, we can transfer this information to those who can use it practically.”

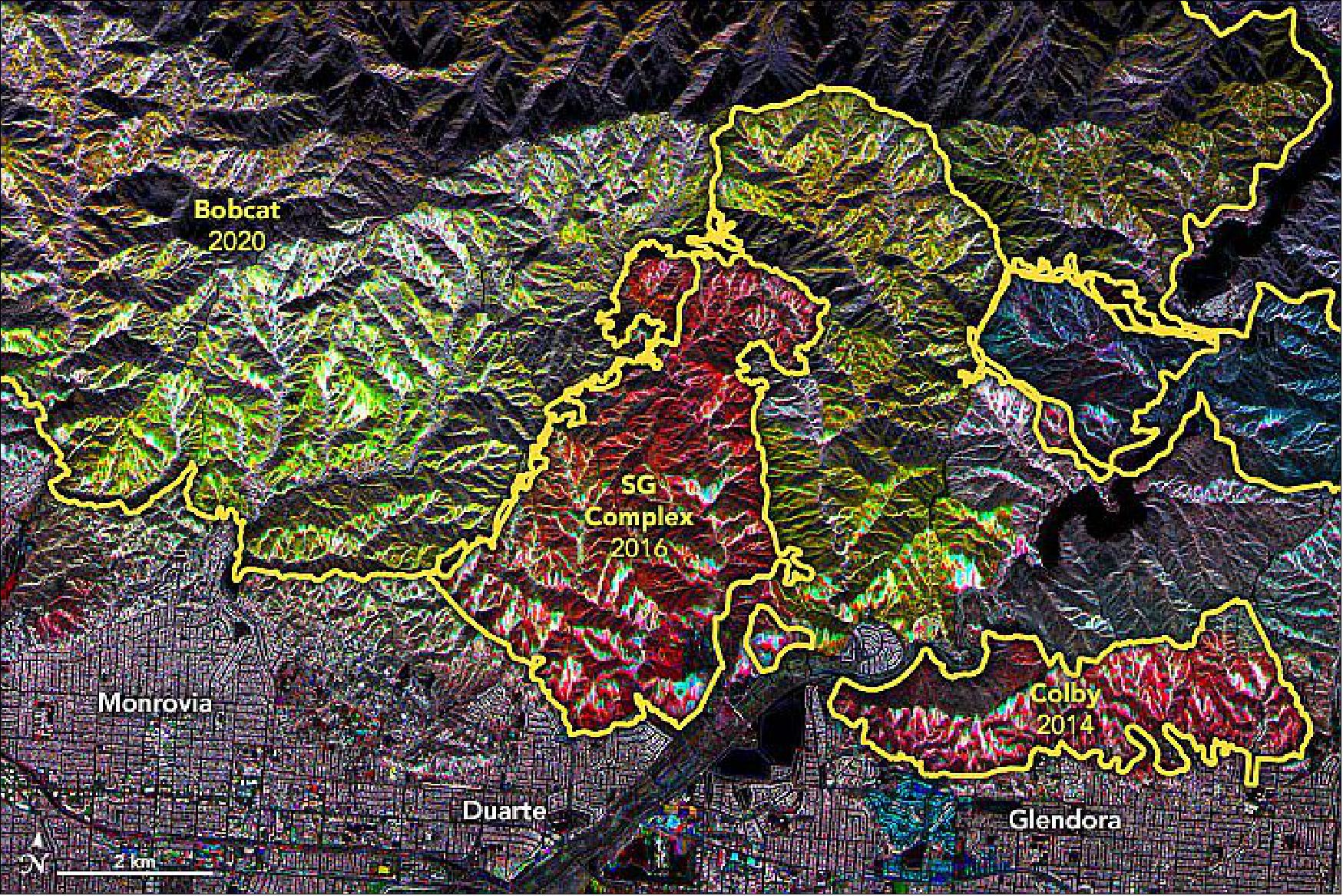

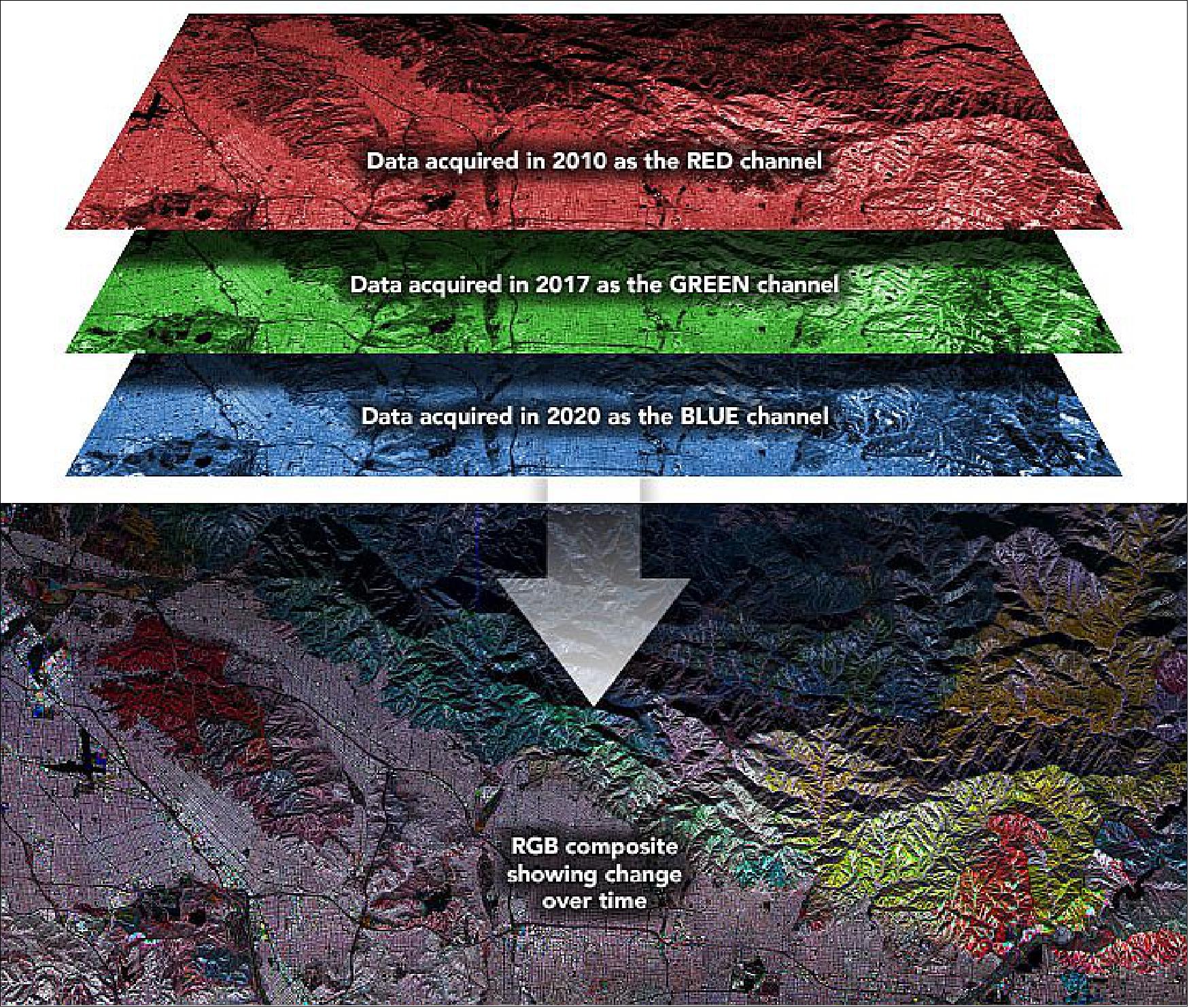

• February 6, 2021: For the past few decades, scientists have been using satellite- and airplane-based radar instruments to detect damage caused by wildfires and human-caused blazes. Radar instruments can observe by day or night and can see land through clouds and smoke, so they are helpful for observing fire fronts and burn scars during and shortly after fire ravages a landscape. 8)

- Landscape ecologist Naiara Pinto and colleagues at NASA’s Jet Propulsion Laboratory are now taking a longer view. They are trying to decipher where and how well forests and scrublands are recovering in the years after a fire.

- Synthetic aperture radar (SAR) instruments send out pulses of microwaves that bounce off of Earth’s surfaces. The reflected waves are detected and recorded by the instrument and can help map the shape of the land surface (topography) and the land cover—from cities to ice to forests. By comparing changes in the signals between two separate satellite or airplane overpasses, scientists can observe surface changes like land deformation after earthquakes, the extent of flooding, or the exposure of denuded or bare ground after large fires.

- SAR instruments are carried on the European Space Agency's Sentinel-1 satellites, while NASA currently deploys its Uninhabited Aerial Vehicle Synthetic Aperture Radar (UAVSAR) via research aircraft. NASA and the Indian Space Research Organisation are planning to launch the NISAR satellite in 2022.

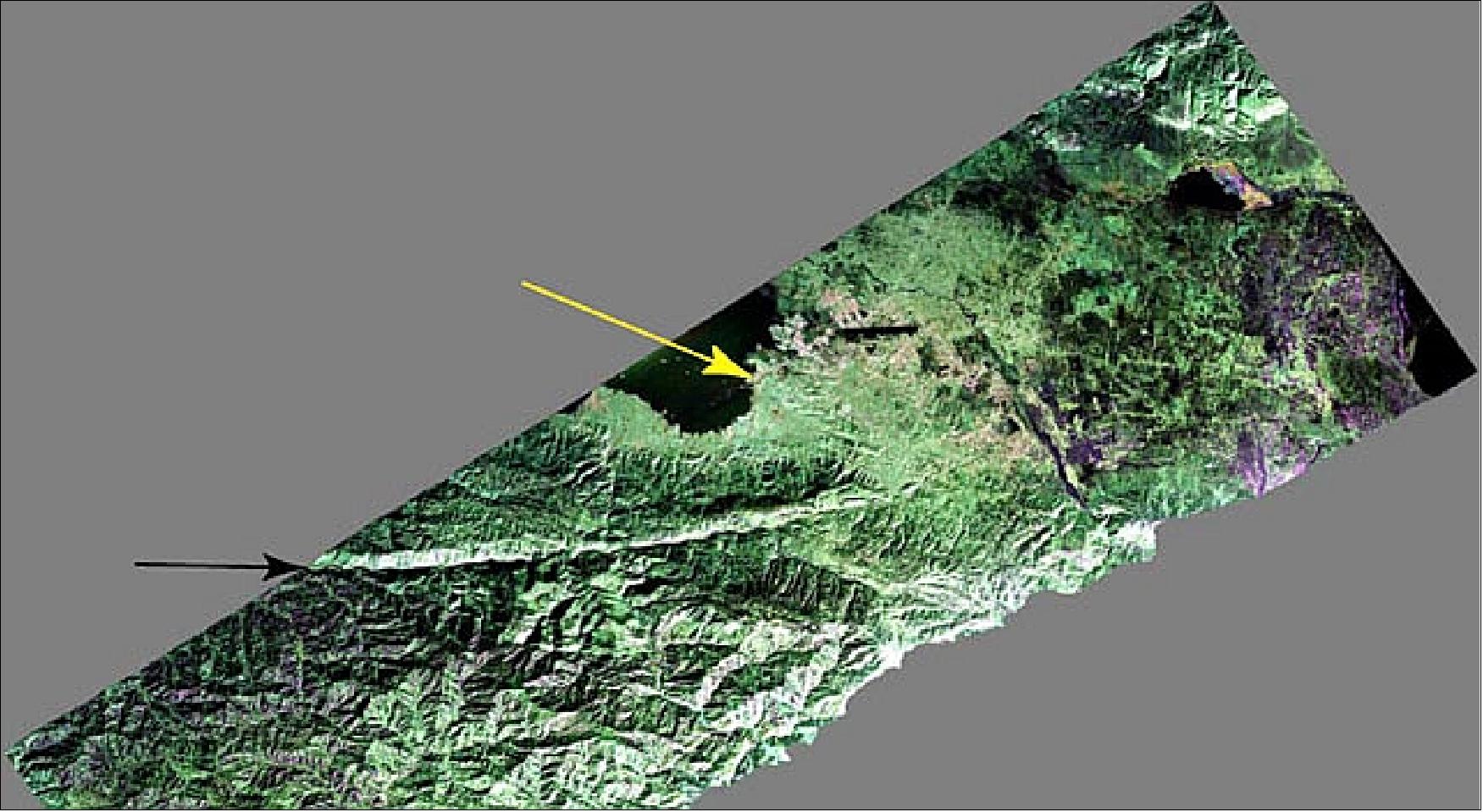

![Figure 4: Scientists are using radar data to decipher where and how well landscapes recover in the years after major fires. Mounted on the bottom of NASA research planes, UAVSAR has been flown over the same portions of Southern California several times since 2009. Pinto and JPL colleagues Latha Baskaran, Yunling Lou, and David Schimel analyzed that data and developed a mapping technique to show the different stages of removal and regrowth of vegetation (chaparral and forest). [image credit: NASA Earth Observatory images by Joshua Stevens, using UAVSAR data and imagery courtesy of Anne Marie Peacock, Naiara Pinto, and Yunling Lou and NASA/Caltech UAVSAR. Story by Michael Carlowicz]](https://eoportal.org/ftp/airborne-sensors/UAVSAR_171221/UAVSAR_Auto17.jpeg)

- “Overall, the colors are telling us that the Angeles National Forest contains a patchwork of plant communities at different stages of regeneration,” said Pinto, who is a science coordinator for UAVSAR. For instance, areas with more red had more vegetation in 2010 than they do now. Areas with more blue and green shading had more vegetation (regrowth) in recent years. Yellow indicates areas burned in 2020 that had a higher volume of vegetation in 2010 and 2017 (red+green) but lower volume in 2020 (blue).

- The project has been supported by NASA’s Earth Applied Sciences Disasters program, which generates maps and other data products for institutional partners as they work to mitigate and recover from natural hazards and disasters. The SAR technique is still being tested and validated, but the intent is to monitor forest regrowth and fire scar change over time, which is important information for forest and fire managers working to manage risks.
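
The color coding described for the Angeles National Forest map (red for 2010, green for 2017, blue for 2020) amounts to a three-date color composite. The sketch below shows the idea for a single pixel. It is an assumption-laden illustration, not the JPL team's mapping technique: the input values are hypothetical vegetation-volume measures normalized to the range 0 to 1, and the function names are invented for the example.

```python
from typing import Tuple


def scale_to_byte(value: float, lo: float, hi: float) -> int:
    """Linearly map value from [lo, hi] to 0..255, clamping outliers."""
    if hi <= lo:
        raise ValueError("hi must be greater than lo")
    fraction = (value - lo) / (hi - lo)
    fraction = min(1.0, max(0.0, fraction))
    return round(fraction * 255)


def composite_pixel(v2010: float, v2017: float, v2020: float,
                    lo: float = 0.0, hi: float = 1.0) -> Tuple[int, int, int]:
    """Return an (R, G, B) triple: red = 2010, green = 2017, blue = 2020."""
    return (scale_to_byte(v2010, lo, hi),
            scale_to_byte(v2017, lo, hi),
            scale_to_byte(v2020, lo, hi))


if __name__ == "__main__":
    # High vegetation volume in 2010 and 2017, low in 2020 (burned in 2020).
    print(composite_pixel(1.0, 1.0, 0.0))  # (255, 255, 0), i.e. yellow
```

A pixel that was densely vegetated in 2010 and 2017 but not in 2020 therefore comes out yellow, matching the interpretation given in the text.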

• September 15, 2020: While the agency's satellites image the wildfires from space, scientists are flying over burn areas, using smoke-penetrating technology to better understand the damage. 9)

- A NASA aircraft equipped with a powerful radar took to the skies this month, beginning a science campaign to learn more about several wildfires that have scorched vast areas of California. The flights are being used to identify structures damaged in the fires while also mapping burn areas that may be at future risk of landslides and debris flows.

- They're part of the ongoing effort by NASA's Applied Sciences Disaster Program in the Earth Sciences Division, which utilizes NASA airborne and satellite instruments to generate maps and other data products that partner agencies on the ground can utilize to track fire hotspots, map the extent of the burn areas, and even measure the height of smoke plumes that have drifted over California and neighboring states.

- Equipped with the Uninhabited Air Vehicle Synthetic Aperture Radar (UAVSAR) instrument, the C-20A jet began flights from NASA's Armstrong Flight Research Center near Palmdale, California, on Sept. 3. This first flight surveyed the LNU Lightning Complex burn area northeast of San Francisco. A Sept. 9 flight focused on fires south of Monterey in Central California.

- Several of the areas have been systematically imaged by UAVSAR approximately every year beginning in 2009, with the two most recent data collections being in 2018 and 2019 as part of larger earthquake-fault monitoring studies. When images from those previous overflights are combined with the new images, the science team can produce what are called damage proxy maps to identify the areas most affected by the fires and plot the location of structures that may have burned.

- After vegetation is burned away, hillsides and valleys can become susceptible to landslides and debris flows during seasonal rains, often months later. By identifying the areas most at risk, scientists can better understand where such hazards may be greatest when the much-needed rains begin in California later this fall.

- The UAVSAR radar pod is mounted to the bottom of the aircraft and is flown repeatedly over an area to measure tiny changes (a few millimeters, or about a quarter of an inch) in surface height with extreme accuracy. The smoke-penetrating instrument is also highly effective at mapping burn scars because radar signals bounce off vegetation in a very different way than they do off freshly burned ground.

- What's more, UAVSAR airborne flights over burn areas produce observations that are 10 times higher in spatial resolution than satellites, and flights can be quickly arranged to collect data over vulnerable areas identified in satellite images.

- "UAVSAR has proven to be an invaluable tool to detect tiny changes in the height of the land," said Yunling Lou, UAVSAR project manager at JPL. "But this radar can also make exquisite measurements of burn scars on any given day and provide daily repeated measurements if needed, which complements mapping efforts by NASA satellites."

- Accompanying the radar on the next set of UAVSAR wildfire flights will be an infrared imager - an instrument that can see through dense smoke and identify active fires - and a visible camera, which are both part of the QUAKES-I (Quantifying Uncertainty and Kinematics of Earth Systems Imager) imaging suite. Scientists will be able to harness the data to generate detailed ground elevation maps in the fire burn areas.

- "We want to use a combination of radar, infrared, and visible imagery to understand where the wildfire is currently active, to map the burn area, and to understand what areas may have an elevated susceptibility of future landslides or debris flows," said Andrea Donnellan, a principal research scientist at JPL.

- These mark the first mapping flights with UAVSAR to support NASA's Disaster Program's data products as California continues to battle some of its worst wildfire seasons on record. These data products are prepared for agencies working on the ground in California, including the California National Guard, California Department of Forestry and Fire Protection (Cal Fire), Governor's Office of Emergency Services, California Geological Survey, and Federal Emergency Management Agency.

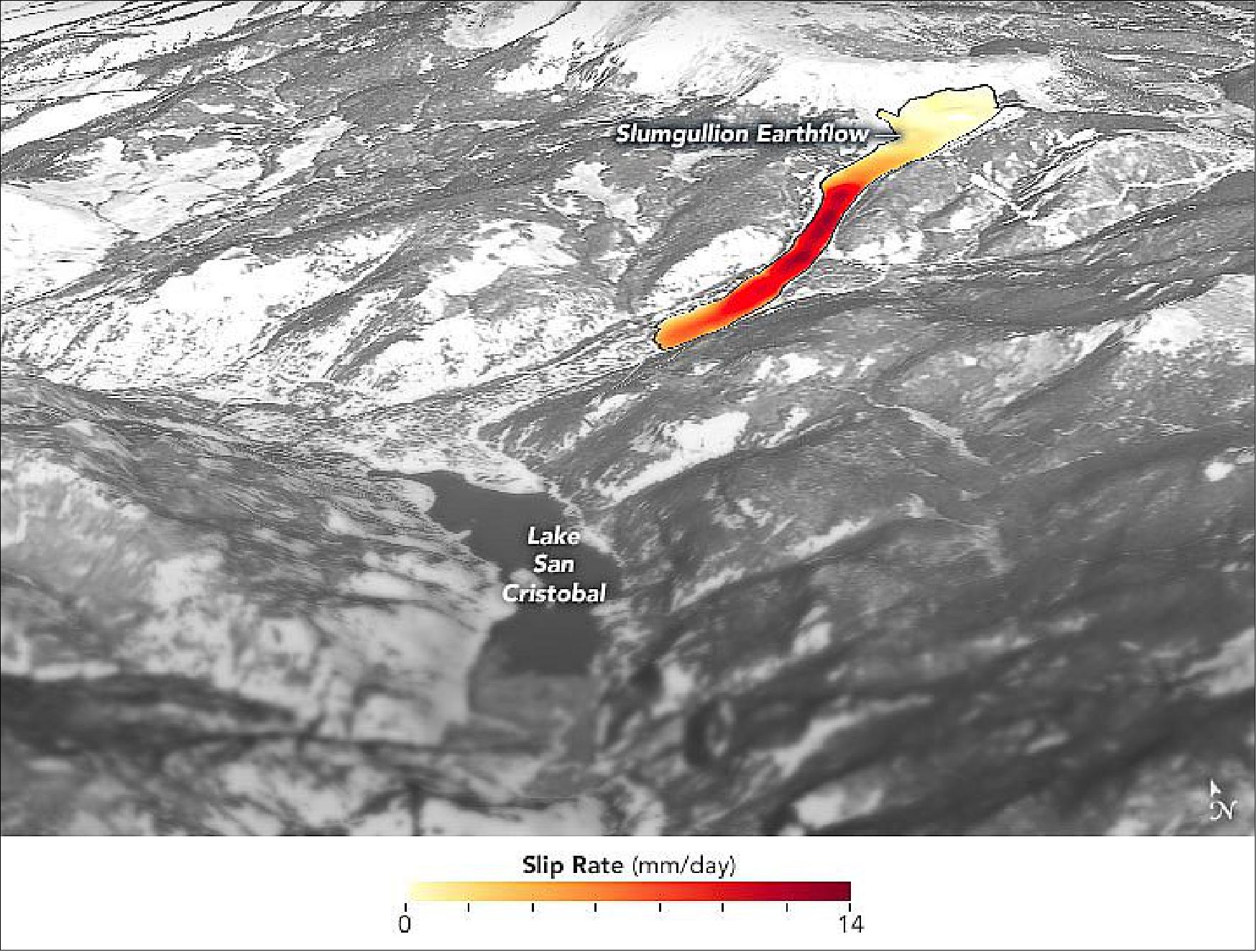

• June 5, 2020: Slow-moving landslides—places where the land creeps sluggishly downhill over long periods of time—are relatively stable until they aren’t. But because landslides are often inaccessible and do not respond uniformly to changes, they can be difficult to predict. 10)

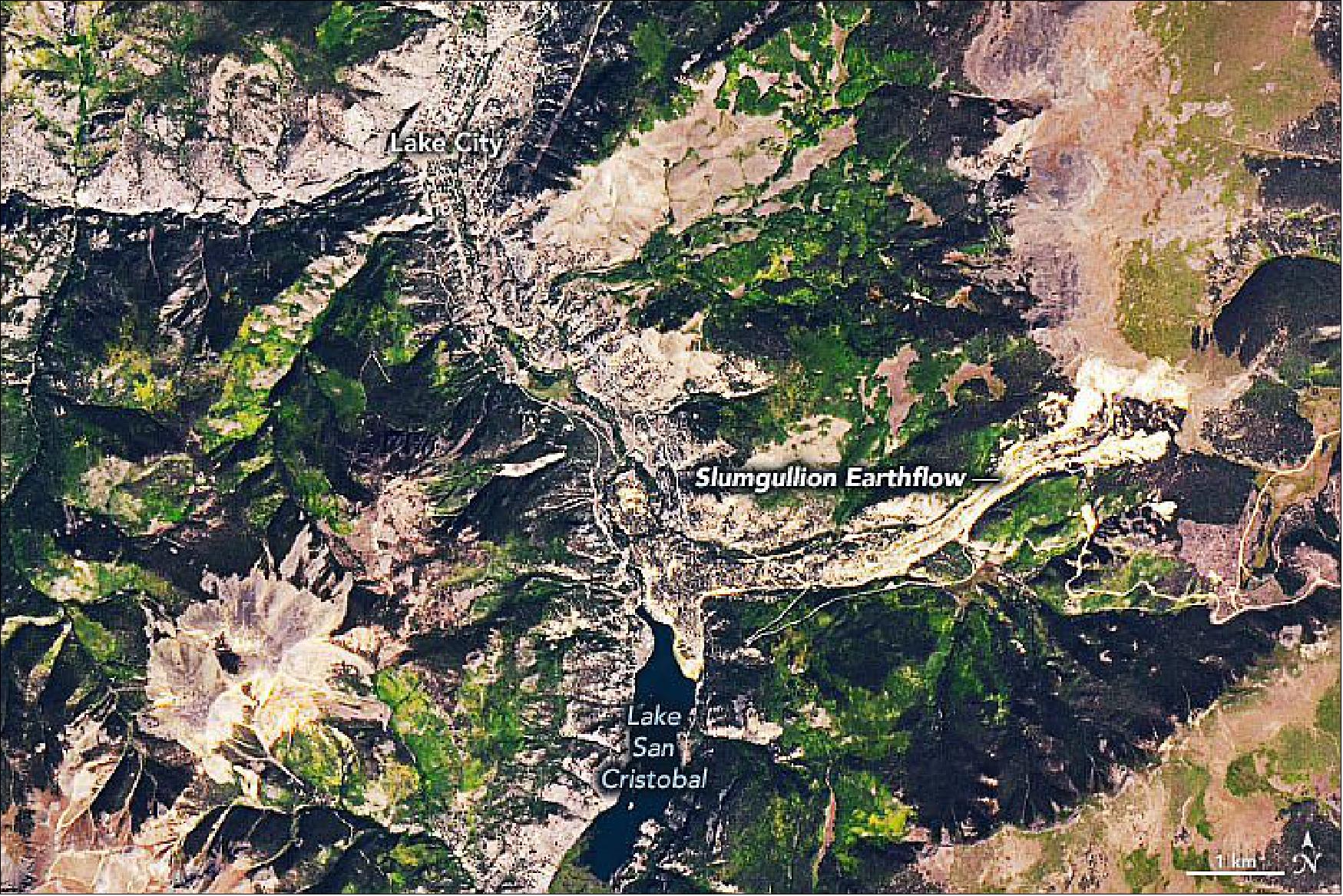

- Through the study of an unusual, long-lasting slide, a research team from the University of California, Berkeley, NASA’s Jet Propulsion Laboratory (JPL), and the U.S. Geological Survey (USGS) has developed a new technique to make prediction both easier and more accurate. The team centered their research on the 4-kilometer (2.5-mile) long Slumgullion landslide in southwestern Colorado. In motion for more than a century, the landslide provides an ideal natural laboratory for studying the dynamics of slow-moving landslides.

- “It’s important to understand how landslides work and how they respond to environmental changes so that we can better predict when they might transition from this gradual motion to a more rapid, catastrophic failure,” said JPL scientist Eric Fielding, a coauthor of the new study. When landslides become unstable due to heavy rain, snowmelt, earthquakes, or volcanic activity, they can quickly turn destructive, especially in populated areas.

- “By combining multiple datasets from the subsurface, ground surface, air, and space, we constructed a mechanical framework to quantify different features and movements of the landslide,” said lead author Xie Hu of UC Berkeley. “High-resolution synthetic aperture radar data from JPL’s airborne UAVSAR instrument was particularly important in developing this framework.” 11)

- Attached to the bottom of an airplane, UAVSAR collects radar images of Earth’s surface that can be used to map changes to the land surface (deformation) with centimeter-scale precision. When the instrument is flown over the same area multiple times, scientists can then glean how much the land has moved and in which direction. Because the instrument is attached to a plane, they can design flight plans to make multiple passes over precisely the same area in a short amount of time.

- “By flying over this landslide multiple times, in different directions at perpendicular angles, we had sufficient data to reconstruct the full three-dimensional motion in very clear detail, as well as how it varies over the years,” said Fielding.

- According to coauthor Bill Schulz, most of the action happens at the bottom of the landslide, with everything sliding on a thin shear zone that may be only a few centimeters thick. Because the depth can vary greatly across a single landslide, different parts of the slide will respond to changes in pressure at different times.

- “Groundwater pressure—from rain and snowmelt, for example—changes first right at the ground surface and last at the bottom of a landslide,” said Schulz. “So if one area of a landslide is half as deep as another, the area that is half as deep will respond first to the change in pressure.” UAVSAR does not directly measure deformation at depth, but the researchers were able to integrate the radar data with field measurements to model the various depths and movements of the slide.

- The study provided insights specific to the Slumgullion slide. “We found that the central part of this landslide moves quickly—about an inch per day, every day. But in observing all of the data over time, we see that the top and bottom parts are moving, too, just far more slowly,” said Fielding. “We also found that the uppermost part of the landslide responds most quickly to spring snowmelt, and that the central part responds significantly to annual variation: drought years versus wet years.”
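
The idea of reconstructing three-dimensional motion from several flight directions can be sketched as a small linear problem: each pass measures only the component of motion along its own line of sight, and three independent look directions are enough to solve for east, north, and up components. The code below is a minimal illustration under that assumption. It is not the study's method (which may combine more passes and a least-squares fit), and the look vectors and displacement values are hypothetical.

```python
from typing import Sequence, Tuple

Vector3 = Tuple[float, float, float]


def det3(m: Sequence[Sequence[float]]) -> float:
    """Determinant of a 3x3 matrix."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def project(displacement: Vector3, look: Vector3) -> float:
    """Line-of-sight component of a displacement (dot product)."""
    return sum(x * y for x, y in zip(displacement, look))


def solve_motion(look_vectors: Sequence[Vector3],
                 los_measurements: Sequence[float]) -> Vector3:
    """Solve for (east, north, up) motion from three line-of-sight values.

    Uses Cramer's rule; raises ValueError if the look directions are not
    independent enough to separate the three components.
    """
    base = det3(look_vectors)
    if abs(base) < 1e-12:
        raise ValueError("look vectors are not linearly independent")
    solution = []
    for col in range(3):
        m = [list(row) for row in look_vectors]
        for row in range(3):
            m[row][col] = los_measurements[row]
        solution.append(det3(m) / base)
    return (solution[0], solution[1], solution[2])


if __name__ == "__main__":
    # Hypothetical unit look vectors (east, north, up) for three passes.
    looks = [(0.6, 0.0, 0.8), (0.0, 0.6, 0.8), (-0.6, 0.0, 0.8)]
    true_motion = (0.010, -0.020, 0.005)  # metres, made-up values
    measured = [project(true_motion, v) for v in looks]
    print(solve_motion(looks, measured))
```

With only two look directions the system is underdetermined, which is one reason flying the same target from several headings matters.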

• February 8, 2017: The Louisiana coastline is sinking under the Gulf of Mexico at the rate of about one football field of land every hour (about 18 square miles of land lost in a year). But within this sinking region, two river deltas are growing. The Atchafalaya River and its diversion channel, Wax Lake Outlet, are gaining about one football field of new land every 11 and 8 hours, respectively (1.5 and 2 square miles per year). Last fall, a team from NASA/JPL (Jet Propulsion Laboratory) in Pasadena, California, showed that radar, lidar and spectral instruments mounted on aircraft can be used to study the growing deltas, collecting data that can help scientists better understand how coastal wetlands will respond to global sea level rise. 12)

- The basics of delta building are understood, but many questions remain about how specific characteristics, such as vegetation types, tides, currents and the shape of the riverbed, affect a delta's growth or demise. That's partly because it's hard to do research in a swamp. "These factors are usually studied using boats and instruments that have to be transported through marshy and difficult terrain," said Christine Rains of JPL, an assistant flight coordinator for the program. "This campaign was designed to show that wetlands can also be measured with airborne remote sensing over a large area."

- JPL researchers fly over the Louisiana coastline at least once a year to keep track of subsidence (sinking) and changes in levees. The most recent airborne flights, however, focused on the growing deltas — specifically, flowing water and vegetation.

- JPL's Marc Simard, principal investigator for the campaign, explained that on a delta, water flows in every direction, including uphill. "Water flows not only through the main channels of the rivers but also through the marshes," he explained. "There is also the incoming tide, which pushes water back uphill. The tide enhances the flow of water out of the main channels into the marshes."

- When the tide goes out, water drains from the marshes, carrying sediment and carbon. The JPL instruments took measurements during both rising and falling tides to capture these flows. They also made the first complete measurement of the slope of the water surface and topography of the river bottom for both rivers from their origin at the Mississippi River to the ocean — necessary information for understanding the rivers' flow speeds.

- Some types of marsh vegetation resist flowing water better than others, as the new measurements have documented. Simard said, "We were really surprised and impressed by how the water level changes within the marshes. In some places, the water changes by 10 cm in an hour or two. In others, it's only 3 or 4 cm. You can see amazing patterns in the remote sensing measurements."

- Three JPL airborne instruments, flying on three planes, were needed to observe the flows and the movement of carbon with the water. The team measured rising and falling water in vegetated areas using the UAVSAR (Uninhabited Aerial Vehicle Synthetic Aperture Radar) instrument in October 2016. They measured the same changes in open water with the ASO (Airborne Snow Observatory) lidar. The AVIRIS-NG (Airborne Visible/Infrared Imaging Spectrometer-Next Generation) was used to estimate the sediment, carbon and nitrogen concentrations in the water.

- Now that the team has demonstrated that these airborne instruments can make precise and detailed measurements in this difficult environment, the researchers plan to use the new data to improve models of how water flows through marshes. Scientists use these models to study how coastal marshes will cope with rising sea levels. With so many measurements available as a reality check, Simard said, "Our models will have to catch up with the observations now."

- In this context, see the growth of the Atchafalaya River and its diversion channel, the Wax Lake Outlet, in satellite imagery covering the last 30 years, presented in the Landsat-7 file at the entry “October 12, 2015” under Mission status.
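
The land-loss and land-gain rates quoted at the start of this entry can be sanity-checked with simple arithmetic. The sketch below assumes an American football field of 360 ft by 160 ft (end zones included), which the text does not specify, so the results are only approximate.

```python
FIELD_LENGTH_FT = 360.0   # assumption: playing field plus both end zones
FIELD_WIDTH_FT = 160.0    # assumption
SQ_FT_PER_SQ_MILE = 5280.0 ** 2
HOURS_PER_YEAR = 365.25 * 24


def sq_miles_per_year(hours_per_field: float) -> float:
    """Area per year if one football field is lost or gained every N hours."""
    fields_per_year = HOURS_PER_YEAR / hours_per_field
    field_area_sq_ft = FIELD_LENGTH_FT * FIELD_WIDTH_FT
    return fields_per_year * field_area_sq_ft / SQ_FT_PER_SQ_MILE


if __name__ == "__main__":
    for label, hours in [("coastline loss", 1.0),
                         ("Atchafalaya gain", 11.0),
                         ("Wax Lake Outlet gain", 8.0)]:
        print(f"{label}: {sq_miles_per_year(hours):.1f} sq mi/yr")
```

Under these assumptions, one field per hour works out to roughly 18 square miles per year, in line with the quoted figure. The 11-hour and 8-hour rates come out slightly above the quoted 1.5 and 2 square miles per year, a difference that the choice of field size alone can account for.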

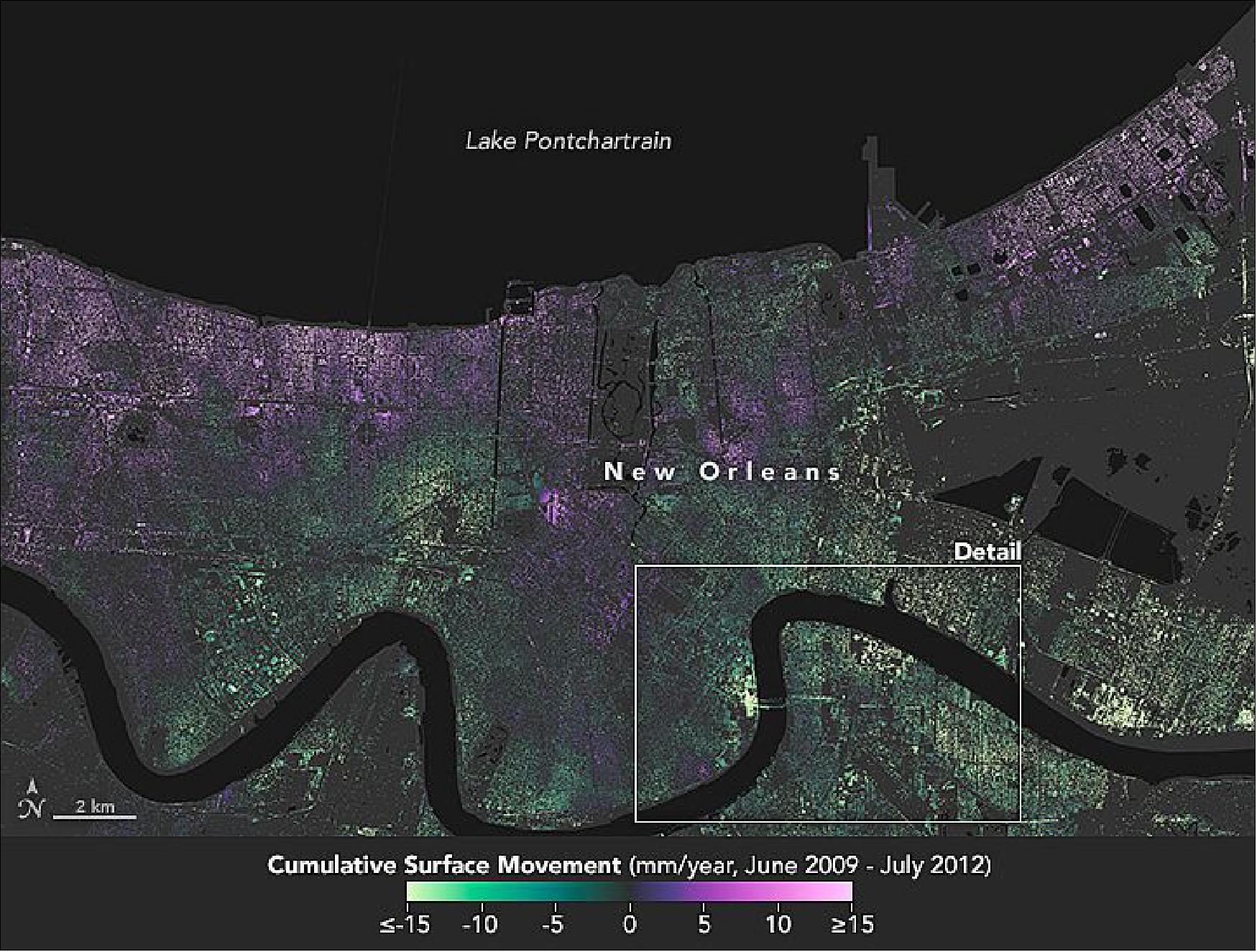

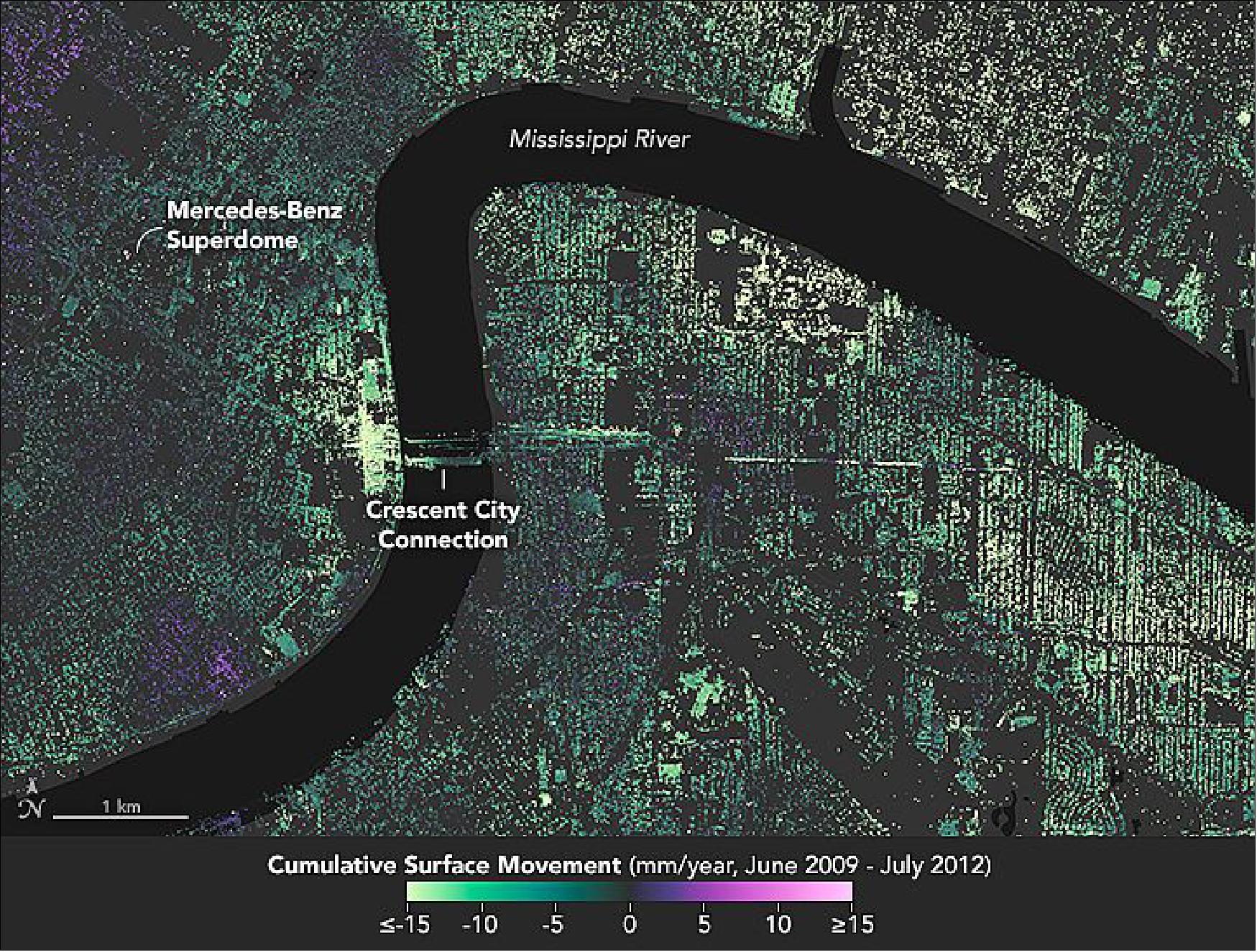

• May 25, 2016: New Orleans and its surrounding areas continue to sink at highly variable rates due to a combination of natural geologic processes and human activity, according to a new study using NASA airborne radar (UAVSAR). The observed rates of sinking, also known as subsidence, were generally consistent with, but somewhat higher than, previous studies conducted with radar data. 13) 14)

- The maps of Figures 12 and 13 show how much the ground in the New Orleans area sank (subsidence) or rose (uplift) relative to its 2009 elevation, plotted in millimeters per year. Shades of green depict lands that sank, while areas in purple rose. The maps depict data collected from June 2009 to July 2012 and analyzed by a team from NASA/JPL (Jet Propulsion Laboratory), UCLA (University of California at Los Angeles), and LSU (Louisiana State University).

- The highest rates of sinking were observed upriver along the Mississippi River around major industrial areas in Norco and Michoud (Figure 12)—with up to 50 mm/year of sinking. The team also observed notable subsidence in New Orleans' Upper and Lower 9th Ward, and in Metairie, where the ground movement could be related to water levels in the Mississippi. At the Bonnet Carré Spillway—the city’s last line of protection against springtime river floods—research showed as much as 40 mm/year of sinking behind the structure and at nearby industrial facilities.

- While the study cites many factors for the regional subsidence, the primary contributors are groundwater pumping and dewatering—surface water pumping to lower the water table, which prevents standing water and soggy ground. “Agencies can use these data to more effectively implement actions to remediate and reverse the effects of subsidence, improving the long-term coastal resiliency and sustainability of New Orleans,” said JPL scientist and lead author Cathleen Jones. “The more recent land elevation change rates from this study will be used to inform flood modeling and response strategies.”

- A key component of the study was data from NASA’s UAVSAR (Uninhabited Aerial Vehicle Synthetic Aperture Radar), which uses a technique known as InSAR (Interferometric Synthetic Aperture Radar). InSAR compares radar images of Earth’s surface acquired over months and years to map surface deformation with centimeter-scale precision. The technique measures total surface elevation changes from all sources, whether human or natural, deep-seated or shallow. UAVSAR’s spatial resolution makes it ideal for measuring change in New Orleans, where human-caused subsidence can be large and often localized. “We were able to identify single structures or clusters of structures subsiding or deforming relative to the surrounding area,” Jones said.

- In addition to the UAVSAR data, researchers from the Center for GeoInformatics (C4G) at LSU provided up-to-date GPS positioning information for industrial and urban locations in southeast Louisiana. This information helped establish the rate of ground movement at specific points. C4G maintains the most comprehensive network of GPS reference stations in the state, including more than 50 Continuously Operating Reference Stations, or CORS sites, that acquire the horizontal and vertical coordinates at each station every second of every day. CORS data help pin InSAR data down to specific, local points on Earth.
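
The subsidence maps in this entry are expressed in millimeters per year. One simple way to turn a series of elevation changes at a single point into such a rate is a least-squares straight-line fit, sketched below. This is an illustration under stated assumptions, not the study's processing chain: the time series is made up, and the function name is hypothetical.

```python
from typing import Sequence


def linear_rate(times_yr: Sequence[float], heights_mm: Sequence[float]) -> float:
    """Least-squares slope of height versus time, in mm per year."""
    n = len(times_yr)
    if n < 2 or n != len(heights_mm):
        raise ValueError("need two or more samples of equal length")
    mean_t = sum(times_yr) / n
    mean_h = sum(heights_mm) / n
    sxx = sum((t - mean_t) ** 2 for t in times_yr)
    if sxx == 0:
        raise ValueError("all samples share the same time")
    sxy = sum((t - mean_t) * (h - mean_h)
              for t, h in zip(times_yr, heights_mm))
    return sxy / sxx


if __name__ == "__main__":
    # Hypothetical elevation change relative to the first acquisition.
    times = [0.0, 1.0, 2.0, 3.0]            # years since first pass
    heights = [0.0, -50.0, -100.0, -150.0]  # millimeters
    print(linear_rate(times, heights))      # negative slope means sinking
```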

• April 2013: A NASA C-20A piloted aircraft carrying the UAVSAR is wrapping up studies over the U.S. Gulf Coast, Arizona, and Central and South America. The plane left NASA's Dryden Aircraft Operations Facility in Palmdale, CA, on March 7. NASA's Jet Propulsion Laboratory in Pasadena built and manages UAVSAR.

- The campaign is addressing a broad range of science questions, from the dynamics of Earth's crust and glaciers to the carbon cycle and the lives of ancient Peruvian civilizations. Flights are being conducted over Argentina, Bolivia, Chile, Colombia, Costa Rica, El Salvador, Ecuador, Guatemala, Honduras, Nicaragua and Peru. 15)

• October 25, 2012: NASA/JPL researchers have developed a method to use a specialized NASA 3-D imaging radar to characterize the oil in oil spills, such as the 2010 BP Deepwater Horizon spill in the Gulf of Mexico. The research can be used to improve response operations during future marine oil spills. 16)

UAVSAR characterizes an oil spill by detecting variations in the roughness of its surface and, for thick slicks, changes in the electrical conductivity of its surface layer. Just as an airport runway looks smooth compared to surrounding fields, UAVSAR "sees" an oil spill at sea as a smoother (radar-dark) area against the rougher (radar-bright) ocean surface because most of the radar energy that hits the smoother surface is deflected away from the radar antenna. UAVSAR's high sensitivity and other capabilities enabled the team to separate thick and thin oil for the first time using a radar system.

Legend to Figure 14: The oil appears much darker than the surrounding seawater in the greyscale image. This is because the oil smooths the sea surface and reduces its electrical conductivity, causing less radar energy to bounce back to the UAVSAR antenna. Additional processing of the data by the UAVSAR team produced the two inset color images, which reveal the variability of the oil spill's characteristics, from thicker, concentrated emulsions (shown in reds and yellows) to minimal oil contamination (shown in greens and blues). Dark blues correspond to areas of clear seawater bordering the oil slick.

• October 2, 2012: The modified NASA C-20A (G-III) aircraft, with JPL's UAVSAR, has left California for a 10-day campaign to study active volcanoes in Alaska and Japan. 17)

• Summer 2011: Since commencing operational science observations in January 2009, the UAVSAR project has conducted over 150 flights acquiring 1700 flight lines of data in 12 countries. So far, the project delivered over 15 TB of POLSAR (Polarimetric SAR) and RPI (Repeat-Pass Interferometry) data products to the science investigators. 18) 19)

- With the current radar configuration onboard the NASA Gulfstream-III operated by NASA Dryden, the project has been conducting science observations including semi-annual observations of the San Andreas fault, monthly observations of the Sacramento Delta levees, annual observations of the Cascades, Aleutian, and Hawaiian volcanoes, and science campaigns to Greenland, Iceland, Central America, and Canada to study the polar ice, volcanoes, earthquakes, tropical and temperate forests, and soil moisture.

- In 2011, the project received funding to add P-band polarimetry capability to UAVSAR to study subcanopy and subsurface soil moisture over a 3-year period. The plan is to replace the L-band active array antenna and frequency up/down-conversion electronics with a P-band passive antenna, high power amplifier, and corresponding frequency up/down-conversion electronics. Flight testing of the P-band configuration is planned for the spring of 2012.

- In addition, NASA's two-year AITT (Airborne Instrument Technology Transition) GLISTIN-A (Airborne Glacier and Land Ice Surface Topography Interferometer) project is funding the development and integration of a Ka-band VV-polarization single-pass interferometry capability into UAVSAR. This involves the development and mounting of the Ka-band front-end electronics to the backplane of a newly developed Ka-band antenna. Flight testing of the Ka-band configuration is also planned for the spring of 2012. Both existing UAVSAR electronics pods will be able to support any of the 3 radar frequency configurations to provide maximum flexibility to the airborne radar test-bed.

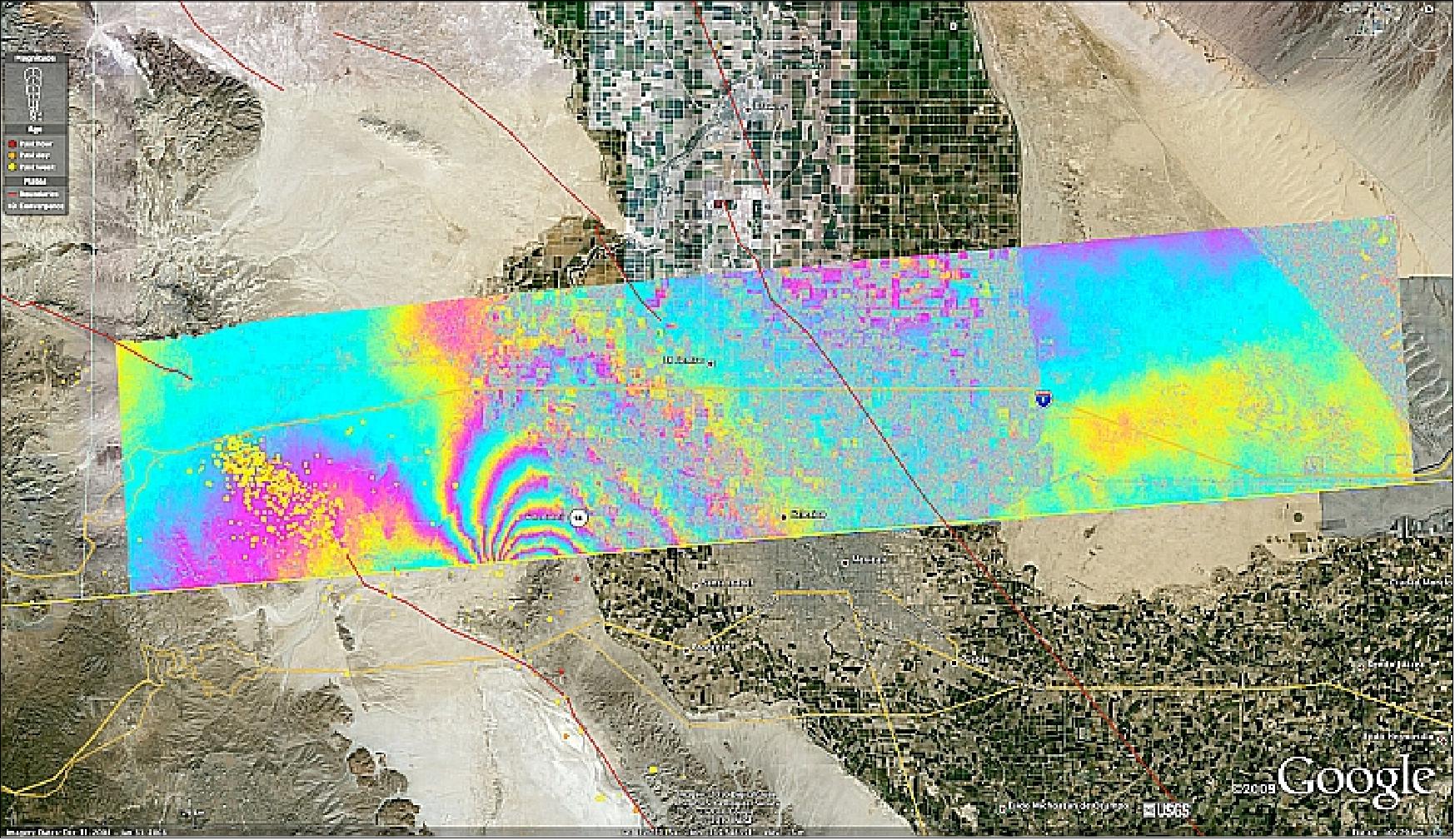

• On April 4, 2010, a major earthquake of magnitude 7.2 rocked Mexico's state of Baja California and parts of the American Southwest. The “El Mayor-Cucapah quake” was centered 52 km south-southeast of Calexico, CA, in northern Baja California. It occurred along a geologically complex segment of the boundary between the North American and Pacific tectonic plates. The quake, the region's largest in nearly 120 years, was also felt in southern California and parts of Nevada and Arizona. It killed two, injured hundreds and caused substantial damage. There have been thousands of aftershocks, extending from near the northern tip of the Gulf of California to a few miles northwest of the U.S. border. 20)

The UAVSAR aircraft provided SAR imagery of the earthquake region from overflights on Oct. 21, 2009 and April 13, 2010. A JPL science team used the data from the two overflights and generated interferograms of the earthquake region. Each UAVSAR flight serves as a baseline for subsequent quake activity. The team estimates displacement for each region, with the goal of determining how strain is partitioned between faults.
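The step from interferogram to displacement estimate can be illustrated with the standard repeat-pass relation between unwrapped phase and line-of-sight motion. The following is a minimal sketch, not the JPL processing chain: it assumes the 23.79 cm wavelength listed in the instrument parameter table, a phase that has already been unwrapped and corrected for topography, and a sign convention (positive phase means increased range) chosen here purely for illustration.

```python
import math

# L-band wavelength as quoted in the instrument parameter table (meters).
WAVELENGTH_M = 0.2379


def los_displacement_m(unwrapped_phase_rad: float,
                       wavelength_m: float = WAVELENGTH_M) -> float:
    """Convert unwrapped repeat-pass phase to line-of-sight displacement.

    The signal travels to the ground and back, so one full phase cycle
    (2*pi) corresponds to half a wavelength of range change.
    Sign convention (assumed): positive phase = increased range.
    """
    return wavelength_m * unwrapped_phase_rad / (4.0 * math.pi)


# Displacement represented by a single interferometric fringe.
one_fringe_m = los_displacement_m(2.0 * math.pi)
print(f"one fringe = {one_fringe_m * 100:.3f} cm of line-of-sight motion")
```

Under these assumptions each fringe corresponds to half a wavelength, roughly 12 cm, of motion along the radar line of sight.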

• A nearly identical UAVSAR pod will be attached to a Global Hawk UAV platform for uninhabited operational flight tests. An additional pod containing another L-band antenna and GPS/INU unit will also be attached to the Global Hawk platform providing dual L-band data collection capability. 21) 22)

• In response to the earthquake disaster in Haiti on Jan. 12, 2010, NASA added a series of science overflights of earthquake faults in Haiti and the Dominican Republic on the island of Hispaniola to a previously scheduled three-week airborne radar campaign to Central America. 23)

Legend to Figure 16: The city is denoted by the yellow arrow; the black arrow points to the fault responsible for the Jan. 12, 2010 earthquake.

• The UAVSAR platform has been flown throughout most of California. Since November 2009, JPL scientists have collected data on a number of Gulfstream III flights over California's San Andreas fault and other major California earthquake faults, a process that will be repeated about every six months for the next several years. From such data, scientists will create 3-D maps for regions of interest (Ref. 23).

• Observation flights on a campaign basis started in August 2009 in temperate and boreal forests. Three sites were located in New Hampshire, Maine and Québec. The UAVSAR sites covered Bartlett, Hubbard Brook, Penobscot, Howland and Mont-Morency experimental forests in addition to covering part of White Mountain National Forest, Laurentides Wildlife Reserve, Jacques-Cartier and Grand-Jardins National Parks. UAVSAR collected data over the 3 sites on 4 different days spread throughout the 11-day campaign. 25)

• In early June 2009, NASA's UAVSAR team completed all the objectives of the Arctic Ice Radar Mission in Greenland and flew to Keflavik International Airport (Iceland) to measure the topography and 3D surface velocities of the temperate ice caps of Iceland. From June 10 to 14, 2009, NASA's Gulfstream III science research aircraft flew five repeat data flights over Iceland. 27)

• First engineering test flights on the Gulfstream III aircraft of the UAVSAR instrument started in September 2007 at Dryden. The sensor is undergoing a one-year development and test period to improve robustness and validate its ability to meet the science objectives. 28)

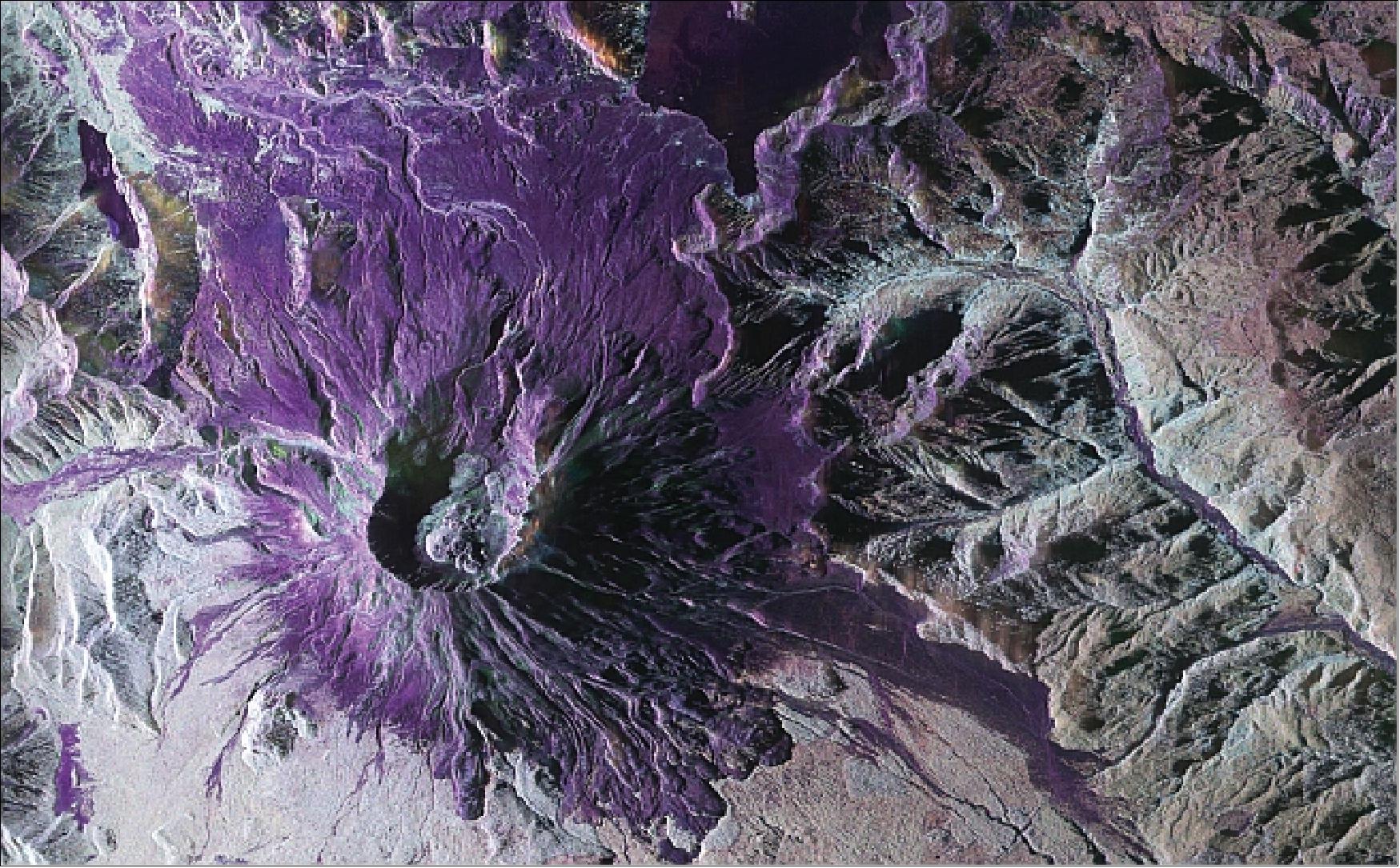

Legend to Figure 18: Although the UAVSAR data was collected when the peak was covered in snow, much detail is visible in the L-band radar imagery. The volcanic caldera dominates the image, with the rising dome visible to the lower left within the caldera. Two glaciers within the caldera, to the upper right of the dome, are being pushed together as the dome expands. The edge of Spirit Lake is at the upper center of the image and the tree line is visible in green. Repeat-pass images of Mt. St. Helens will be used to measure the dome deformation and the glacier movement.

UAVSAR instrument:

The design features an AESA (Active Electronically Scanned Array) antenna which is electronically steered along-track to assure that the antenna beam can be directed independently, regardless of speed and wind direction. Other features supported by the antenna include elevation monopulse and pulse-to-pulse re-steering capabilities that will enable some novel modes of operation. 29)

From an implementation point of view, the objectives of the UAVSAR project are to:

- Develop a miniaturized polarimetric L-band SAR for use on a UAV

- Develop the associated processing algorithms for repeat-pass differential interferometric measurements

- Conduct measurements of geophysical interest, particularly changes of rapidly deforming surfaces such as volcanoes or earthquakes.

| Parameter | Value |
| --- | --- |
| Center frequency | 1.2575 GHz (L-band), corresponding to a wavelength of 23.79 cm |
| Chirp bandwidth | 80 MHz |
| Intrinsic resolution | 1.8 m slant range, 0.8 m azimuth |
| Polarization | Full quad-polarization |
| Swath width at nominal altitude of 13800 m | 16 km |
| Look angle range | 25º - 65º |
| Raw ADC bit quantization | 12 bit (baseline) |
| Waveform | Nominal chirp/arbitrary waveform |
| Antenna size | 0.5 m (range) x 1.6 m (azimuth) |
| Azimuth steering capability | > ±20º |
| Instrument power | 3.1 kW |
| Polarization isolation | < -20 dB |
| NESZ (Noise Equivalent Sigma Zero) | < -50 dB |
| Operating altitude range | 2000 - 18000 m |
| Ground speed range | 100 - 250 m/s |
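The quoted intrinsic resolutions can be related to the chirp bandwidth and antenna length through the usual textbook approximations for a stripmap SAR. The sketch below assumes an unweighted chirp (slant range resolution c/2B) and a fully focused stripmap aperture (azimuth resolution of half the antenna length); the source does not state how its figures were derived, so the small difference in the range value should be read as a rounding or definition difference rather than an error.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def slant_range_resolution_m(bandwidth_hz: float) -> float:
    """Textbook slant range resolution for an unweighted chirp: c / (2B)."""
    return SPEED_OF_LIGHT_M_S / (2.0 * bandwidth_hz)


def stripmap_azimuth_resolution_m(antenna_length_m: float) -> float:
    """Textbook stripmap azimuth resolution: half the antenna length."""
    return antenna_length_m / 2.0


# Values taken from the parameter table: 80 MHz chirp, 1.6 m azimuth length.
range_res_m = slant_range_resolution_m(80e6)
azimuth_res_m = stripmap_azimuth_resolution_m(1.6)
print(f"slant range resolution ~ {range_res_m:.2f} m")
print(f"azimuth resolution     ~ {azimuth_res_m:.2f} m")
```

With these approximations the 80 MHz bandwidth gives a slant range resolution a little under 1.9 m and the 1.6 m antenna gives 0.8 m in azimuth, in line with the tabulated values.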

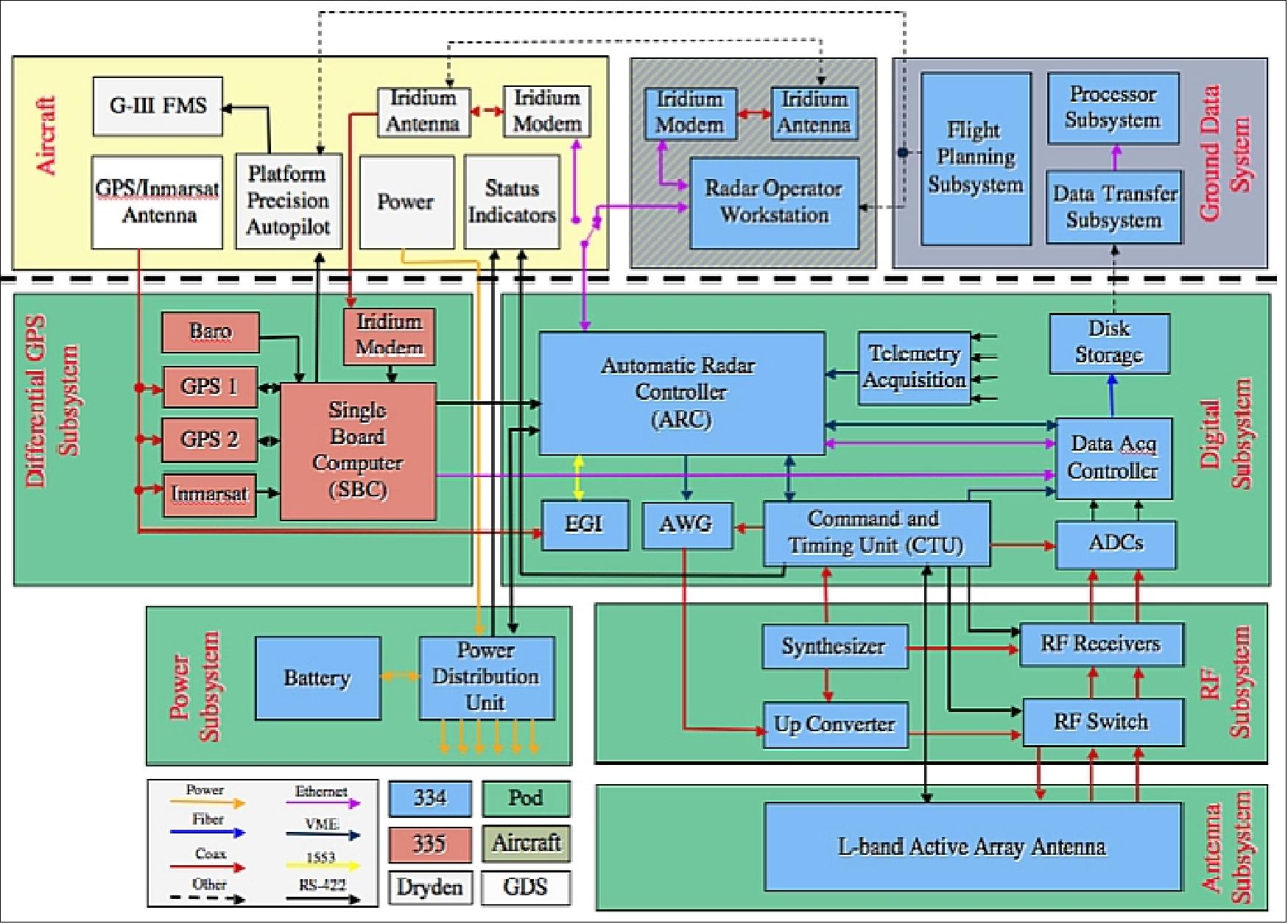

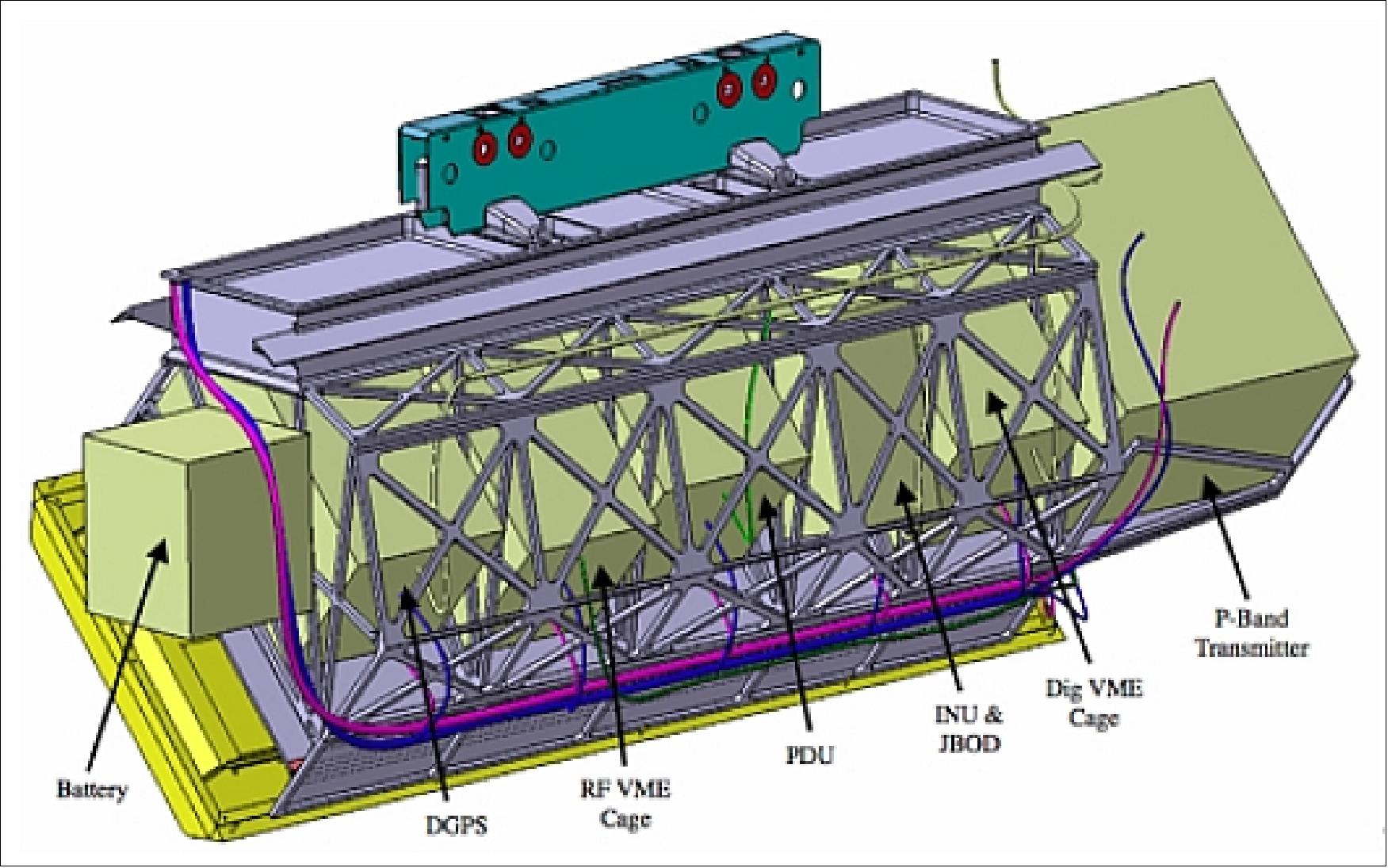

Figures 20 and 21 provide an overview of the UAVSAR system elements and its main interfaces. The radar has been designed to minimize the number of interfaces with the aircraft for improved portability. The aircraft provides 28 V DC power to the radar via the PDU (Power Distribution Unit), which is also responsible for maintaining the thermal environment in the pod, and the radar provides its real-time DGPS position data to the aircraft for use by the Precision Autopilot. Waypoints for the desired flight paths are generated prior to flight by the G-III FMS (Flight Management Subsystem) and loaded into the PPA (Platform Precision Autopilot) as well as into the radar's ARC (Automatic Radar Controller) along with radar command information for each waypoint.

The ARC (Automatic Radar Controller) is the main control computer for the radar and controls all major functions of the radar during flight. It is designed to operate in a fully autonomous mode or to accept commands from the ROW (Radar Operator Workstation) either through an ethernet connection on crewed platforms or through an Iridium modem for unpiloted platforms.

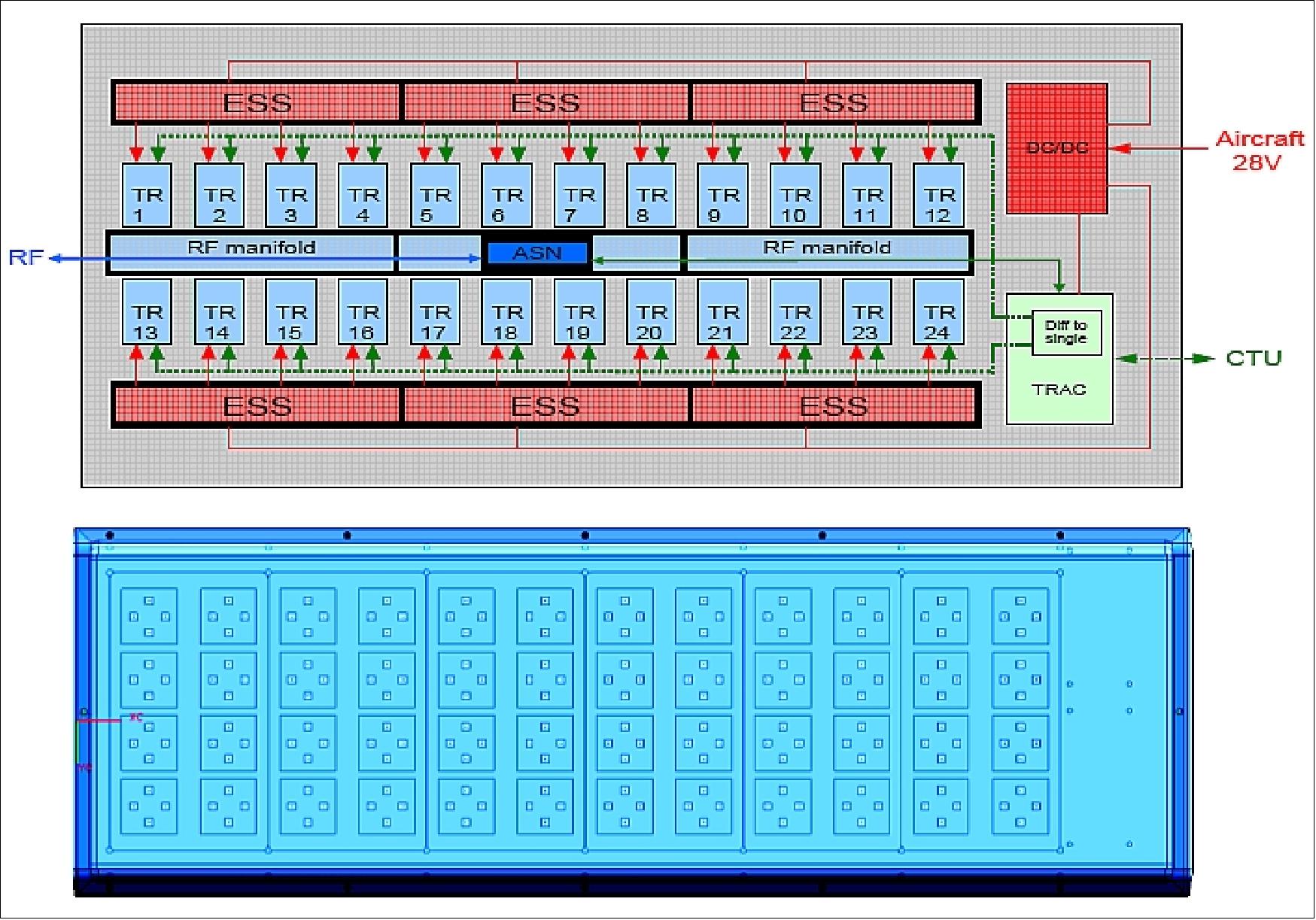

The CTU (Control and Timing Unit) controls the timing of all the transmit and receive events in the radar timeline and thus interacts with many of the radar digital and radio frequency (RF) electronics. The active array antenna consists of 24 L-band transmit/receive (T/R) modules, each rated at 130 W, that feed 48 radiating elements within the 0.5 m by 1.5 m array. Figure 22 illustrates how the various electronic subsystems of UAVSAR are arranged within the pod.

The UAVSAR system requires just under 2 kW of DC power when the radar is transmitting L-band polarimetric RTI (Repeat Track Interferometric) SAR measurements. This is well within the capacity of the Gulfstream III aircraft and many other platforms considered for hosting the UAVSAR radar. The standby DC power is on the order of 150 W. The active array antenna has a mass of < 50 kg; the mass of each T/R module is ~ 0.5 kg. The remainder of the radar electronics in the payload bay has a mass of < 100 kg (approximately 20 kg for the RFES (Radio Frequency Electronic Subsystem), 30 kg for the DES (Digital Electronic Subsystem), and 30 kg for cabling, power distribution, etc.). The combined instrument mass is < 230 kg.

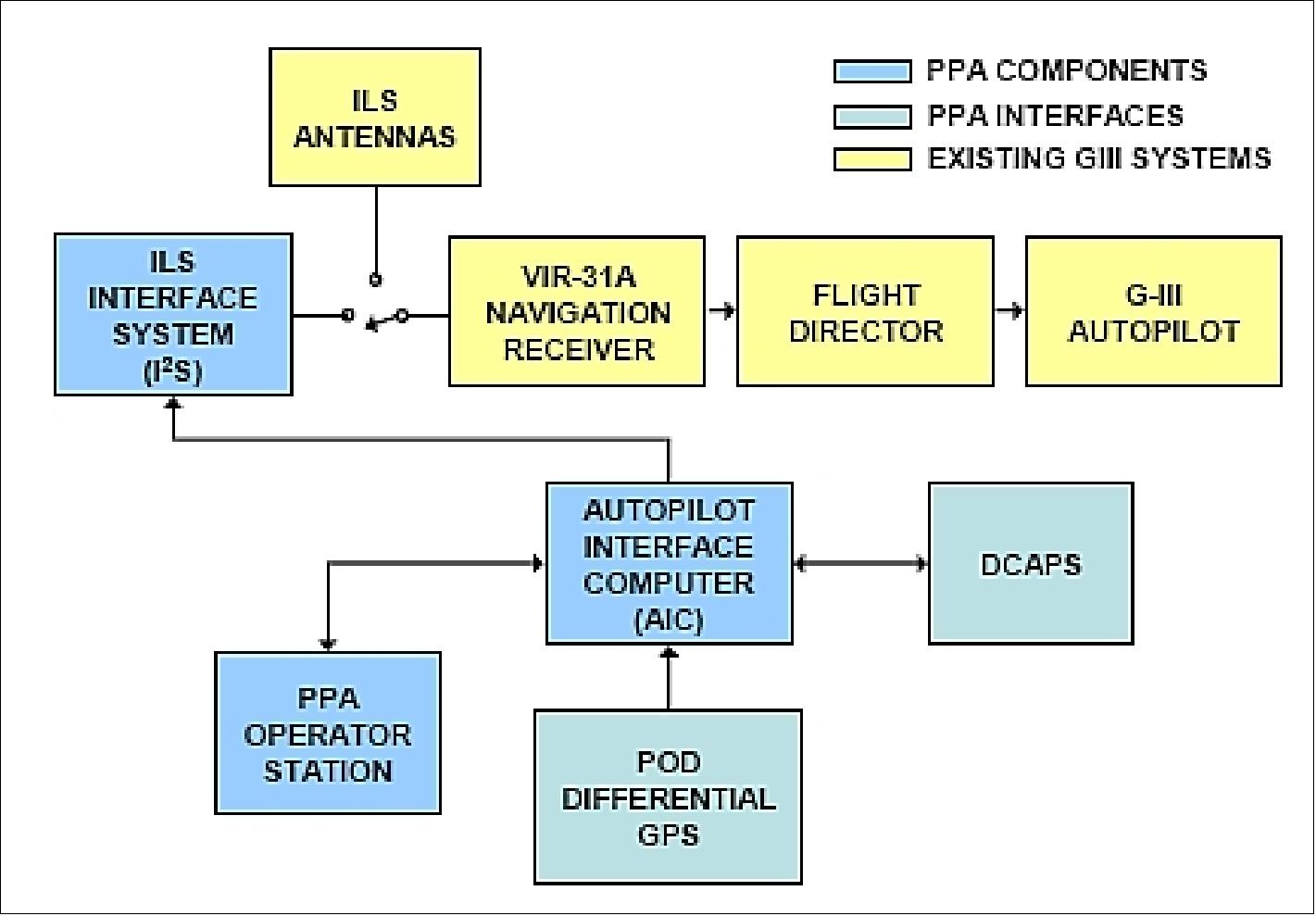

PPA (Platform Precision Autopilot):

The PPA hardware components and interfaces are outlined in Figure 23. The main element is the AIC (Autopilot Interface Computer), a Phytec MPC565 single board computer operating at 56 MHz.

The PPA software is hosted on the AIC, which provides interfaces to three external data sources: the differential GPS (dGPS), the DCAPS (Data Collection and Processing System), and the Operator Station. The AIC also outputs control signals to the ILS (Instrument Landing System), I2S (Interface System), which is in turn connected to the navigation receiver. The differential GPS receiver (dGPS), developed at JPL, provides ECEF (Earth Centered Earth Fixed) positions in meters. It achieves high accuracy using two sources of GPS correction (Inmarsat and Iridium) and two differential GPS units. The dGPS position accuracy is estimated at 10 cm horizontally and 20 cm vertically (1σ) at 1 Hz.

For an observation flight, an experimenter need only select waypoints and the desired flight altitude; all other functions of platform control and navigation are provided by the PPA.
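To make the repeat-track requirement concrete, the following sketch checks whether an ECEF position lies inside the 10 m tube around a planned track. It is an illustration only and not the PPA control law: it assumes the "10 m tube" is a diameter (a 5 m allowed radius), approximates the planned track between two waypoints as a straight ECEF line (reasonable only over short segments), and uses hypothetical function names.

```python
import math


def cross_track_distance_m(p, a, b):
    """Distance from point p to the line through a and b (ECEF, meters)."""
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    # Fraction along the segment of the point closest to p.
    t = sum(ap[i] * ab[i] for i in range(3)) / sum(c * c for c in ab)
    closest = [a[i] + t * ab[i] for i in range(3)]
    return math.dist(p, closest)


def inside_tube(p, a, b, tube_diameter_m=10.0):
    """True if p deviates from the planned line by no more than the tube radius."""
    return cross_track_distance_m(p, a, b) <= tube_diameter_m / 2.0


# Toy geometry: a 1 km straight segment along x, in a local frame for clarity.
start, end = (0.0, 0.0, 0.0), (1000.0, 0.0, 0.0)
on_track = inside_tube((500.0, 3.0, 3.0), start, end)   # about 4.2 m off the line
off_track = inside_tube((500.0, 6.0, 0.0), start, end)  # 6 m off the line
print(on_track, off_track)
```

With the quoted dGPS accuracy of 10 cm horizontally and 20 cm vertically, the position uncertainty is small compared with the 5 m radius assumed here.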

DES (Digital Electronic Subsystem):

DES provides the overall timing and control signals for the radar as well as the telemetry and data acquisition functions. The ARC coordinates and controls all the radar subsystems. The ARC can be operated in two primary modes, manual mode and automatic mode. In automatic mode the ARC tracks the aircraft trajectory using data from the INU (Inertial Navigation Unit) and initiates power state transitions, data take start and end, and other housekeeping functions based on proximity to predefined waypoints uploaded from the ROW prior to flight. The ARC flight software uses VxWorks as its operating system.
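The waypoint-driven behaviour of the automatic mode can be sketched as a proximity trigger. This is a simplified illustration, not the ARC flight software: the command names, the flat 2D coordinates, and the fixed trigger radius are all hypothetical choices made here, since the source does not describe the actual trigger logic.

```python
import math
from dataclasses import dataclass


@dataclass
class Waypoint:
    x_m: float
    y_m: float
    command: str  # hypothetical command name attached to the waypoint


def due_commands(position, waypoints, radius_m, already_fired):
    """Return commands of waypoints newly within radius_m of the position.

    Each waypoint fires at most once; already_fired is updated in place.
    """
    fired = []
    for index, wp in enumerate(waypoints):
        if index in already_fired:
            continue
        if math.hypot(position[0] - wp.x_m, position[1] - wp.y_m) <= radius_m:
            already_fired.add(index)
            fired.append(wp.command)
    return fired


# A toy flight line along x with three pre-loaded waypoints.
plan = [
    Waypoint(0.0, 0.0, "POWER_ON"),
    Waypoint(1000.0, 0.0, "START_DATA_TAKE"),
    Waypoint(5000.0, 0.0, "END_DATA_TAKE"),
]
fired_indices = set()
command_log = []
for x in range(-200, 5300, 100):  # simulated navigation updates every 100 m
    command_log.extend(
        due_commands((float(x), 0.0), plan, radius_m=150.0,
                     already_fired=fired_indices)
    )
print(command_log)
```

Keeping the set of already-fired waypoints outside the function lets the same check run on every navigation update without repeating a command.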

In addition to commanding the radar timing unit during science data collection, the ARC flight software handles the embedded GPS/INU telemetry collection, tracks the aircraft flight path, performs electronic beam steering calculations, updates attenuation parameters and monitors temperatures inside the pod.

The UAVSAR radar can operate in a number of complex modes including multi-polarization, multi-frequency and multi-antenna modes of operation.

Antenna subsystem:

The antenna is designed to radiate orthogonal linear polarizations for fully-polarimetric measurements. Beam-pointing requirements for repeat-pass SAR interferometry necessitate electronic scanning in azimuth over a range of ±20º to compensate for aircraft yaw. Beam-steering is accomplished by transmit / receive (T/R) modules and a beamforming network implemented in a stripline circuit board (Ref. 5).

The antenna aperture comprises 48 patch antenna elements arranged as an array of 4 elements in elevation by 12 elements in azimuth. The elevation spacing of the elements is 10 cm and the azimuth spacing is 12.5 cm. The corresponding aperture size is 0.4 m x 1.5 m, but the antenna groundplane is larger (0.6 m x 1.75 m) to accommodate the various antenna electronics subassemblies, and also to facilitate operation with existing P-band equipment (Ref. 5).
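The element spacings above are enough to sketch how azimuth steering translates into per-column phase settings. The code below is an illustration under stated assumptions rather than the TRAC implementation: it uses the 23.79 cm wavelength from the instrument table, a 6-bit phase shifter (the minimum resolution listed in the T/R module specification), an arbitrary sign convention, and the standard uniform-linear-array formulas.

```python
import math

WAVELENGTH_M = 0.2379   # from the instrument parameter table
AZ_SPACING_M = 0.125    # azimuth element spacing
N_AZ_COLUMNS = 12       # elements in azimuth


def steering_phases_deg(scan_deg, n=N_AZ_COLUMNS, d=AZ_SPACING_M,
                        lam=WAVELENGTH_M):
    """Progressive phase (degrees, wrapped to [0, 360)) for each column."""
    step = -360.0 * d * math.sin(math.radians(scan_deg)) / lam
    return [(i * step) % 360.0 for i in range(n)]


def quantize_deg(phase_deg, bits=6):
    """Round a phase to the nearest state of a bits-wide phase shifter."""
    lsb = 360.0 / (1 << bits)
    return (round(phase_deg / lsb) * lsb) % 360.0


def max_grating_free_scan_deg(d=AZ_SPACING_M, lam=WAVELENGTH_M):
    """Largest scan angle before a grating lobe enters visible space."""
    return math.degrees(math.asin(min(1.0, lam / d - 1.0)))


aperture_m = (4 * 0.10, N_AZ_COLUMNS * AZ_SPACING_M)  # elevation x azimuth
phases_20deg = [quantize_deg(p) for p in steering_phases_deg(20.0)]
grating_limit_deg = max_grating_free_scan_deg()
print(aperture_m, grating_limit_deg)
```

Under these assumptions the 12.5 cm spacing stays free of grating lobes out to roughly 65º, comfortably beyond the ±20º scan range, and the element counts reproduce the quoted 0.4 m x 1.5 m aperture.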

The antenna elements are single-layer microstrip patches (~ 8 cm x 8 cm in size) on a low-permittivity dielectric, fabricated from fiberglass honeycomb. The patch elements are fed with two probes for each polarization to provide the required bandwidth over scan. The elements are capable of radiating both horizontal (H) and vertical (V) polarization (Ref. 5).

There are 24 T/R modules feeding elements pair-wise in elevation. This architecture facilitates beam scanning in azimuth and also enables short-baseline cross-track interferometry between the antenna upper and lower halves, while using the minimum number of T/R modules. A bank of four T/R modules is fed from a single ESS (Energy Storage Subsystem), essentially a custom DC/DC converter that provides 32 V DC power at up to 47 A pulsed. The T/R modules are configured to transmit either an H-pulse or a V-pulse, and to receive both an H-pulse and a V-pulse simultaneously. The peak power of the T/R modules is 100 W, and the maximum duty cycle is 5%. The average and peak RF powers radiated by the antenna are 93 W and 1.86 kW, respectively (assuming losses of 1.1 dB). The T/R modules are cooled by means of an air duct that runs along the length of the antenna. The air velocity in the duct is controlled to maintain a relatively low thermal gradient across the T/R modules (Ref. 5).
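The radiated power figures follow directly from the module count, per-module peak power, loss, and duty cycle quoted above. The short calculation below reproduces them; the only assumption is that the 1.1 dB loss applies uniformly to the summed output of all 24 modules.

```python
N_MODULES = 24
MODULE_PEAK_W = 100.0
LOSS_DB = 1.1
MAX_DUTY_CYCLE = 0.05

# Convert the loss in dB to a linear power ratio.
loss_factor = 10 ** (-LOSS_DB / 10.0)

peak_radiated_w = N_MODULES * MODULE_PEAK_W * loss_factor
average_radiated_w = peak_radiated_w * MAX_DUTY_CYCLE

print(f"peak radiated power    ~ {peak_radiated_w / 1000:.2f} kW")
print(f"average radiated power ~ {average_radiated_w:.0f} W")
```

The result is about 1.86 kW peak and 93 W average, matching the figures in the text.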

Beamforming is implemented by a combination of phase shifters and attenuators in the T/R modules, and by means of a network of printed circuit manifolds. The T/R module vendor, Remec Defense and Space Inc., is designing custom L-band MMICs to implement the phase shifter and LNA functions of the T/R module. There are four RF manifolds: one for transmit, two for receive (one for H and one for V), and one for calibration. Separate manifolds are provided for the upper and lower halves of the antenna to facilitate short baseline cross-track interferometry. The manifolds consist of two 12-way corporate dividers fabricated as stripline transmission lines in multi-layer printed circuit boards that are located between the two rows of T/R modules. The common ports of the 12-ways are connected to a switching network that routes receive, transmit, and calibration signals to and from the RF electronics, as required by the radar operating mode (Ref. 5).

Figure 24 is a block diagram of the phased antenna array which was designed, built and integrated at JPL. The figure shows the top side and bottom side of the antenna and depicts the electronics assemblies and radiating aperture, respectively. An aluminum honeycomb panel forms the mechanical backbone of the antenna (Ref. 6).

The T/R modules and antenna switch network are controlled through a digital interface called the T/R antenna controller (TRAC). The TRAC receives command and timing information from flight software and a hardware interface called the central timing unit (CTU), and in turn feeds LVDS serial data to the T/R modules through twisted-pair cables (Ref. 6).

| Parameter | Value |
| --- | --- |
| Electrical Parameters | |
| Operating frequency range | 1.215 - 1.3 GHz |
| Duty cycle | 0 - 5% |
| PRF (Pulse Repetition Frequency) | 0 - 4000 Hz |
| Average DC power (5% duty cycle) | ≤ 26 W |
| Transmitter channels | 1 |
| Transmit drive level | 10 dBm |
| Transmit output power (50 ohm load) | ≥ 48+NF dBm |
| Transmitter output power flatness | ≤ ±0.5 dB |
| Transmitter output power variability | ≤ 0.5 dB rms |
| Transmit pulse output power drop | ≤ 1 dB |
| Transmit pulse length | 5 - 50 µs |
| Transmit pulse-to-pulse phase variation | ≤ 2º rms |
| Transmit output harmonics | -30 dBc |
| Transmit spurious signals | ≤ -50 dBc |
| Transmit phase noise (10 kHz - 80 MHz) | 100 dBc/Hz |
| Non-transmit polarization suppression | ≥ 40 dB |
| Receiver channels | 2 |
| Receiver gain | 25 - 30 dB |
| Receiver gain flatness | ≤ ±0.5 dB |
| Receiver input 1 dB compression | ≥ -30 dBm |
| Receiver gain H-V variation | ≤ 0.5 dB |
| Receiver phase H-V variation | ≤ 5º |
| Receiver protector isolation | ≥ 30 dB |
| Receiver H-V isolation | ≥ 40 dB |
| Attenuation range | 0 to ≥ 14 dB |
| Attenuation precision | 0.5 dB |
| Attenuation accuracy | ≤ 10% |
| Phase shifter resolution | ≥ 6 bits |
| Phase linearity | ≤ ±10º |
| RF port return loss | ≥ 14 dB |
| Mid-band gain variation (nominal) | ≤ 0.05 dB/ºC |
| Mid-band phase variation (nominal) | ≤ 0.5 deg/ºC |
| Phase stability (30 s) at constant temperature | 2 rms |
| Settling time (Tx to Rx and Rx to Tx) | ≤ 5 s |
| Mechanical Parameters | |
| Dimensions (inclusive connectors and tabs) | ≤ 16 cm x 12 cm x 3 cm |
| Mass | ≤ 680 g |
| Antenna port RF interface | SMA (SubMiniature version A) connectors |
| Non-antenna port RF interface | GPO (Gilbert Push-On)-type connectors |
| Environmental Parameters | |
| Operating temperature range | ±40ºC |
| Non-operating temperature | -60ºC to +70ºC |
| Operating altitude | 0 - 18,000 m |
| Operating humidity range | 0 - 100% |
| Mean time between failures | ≥ 50,000 hrs |

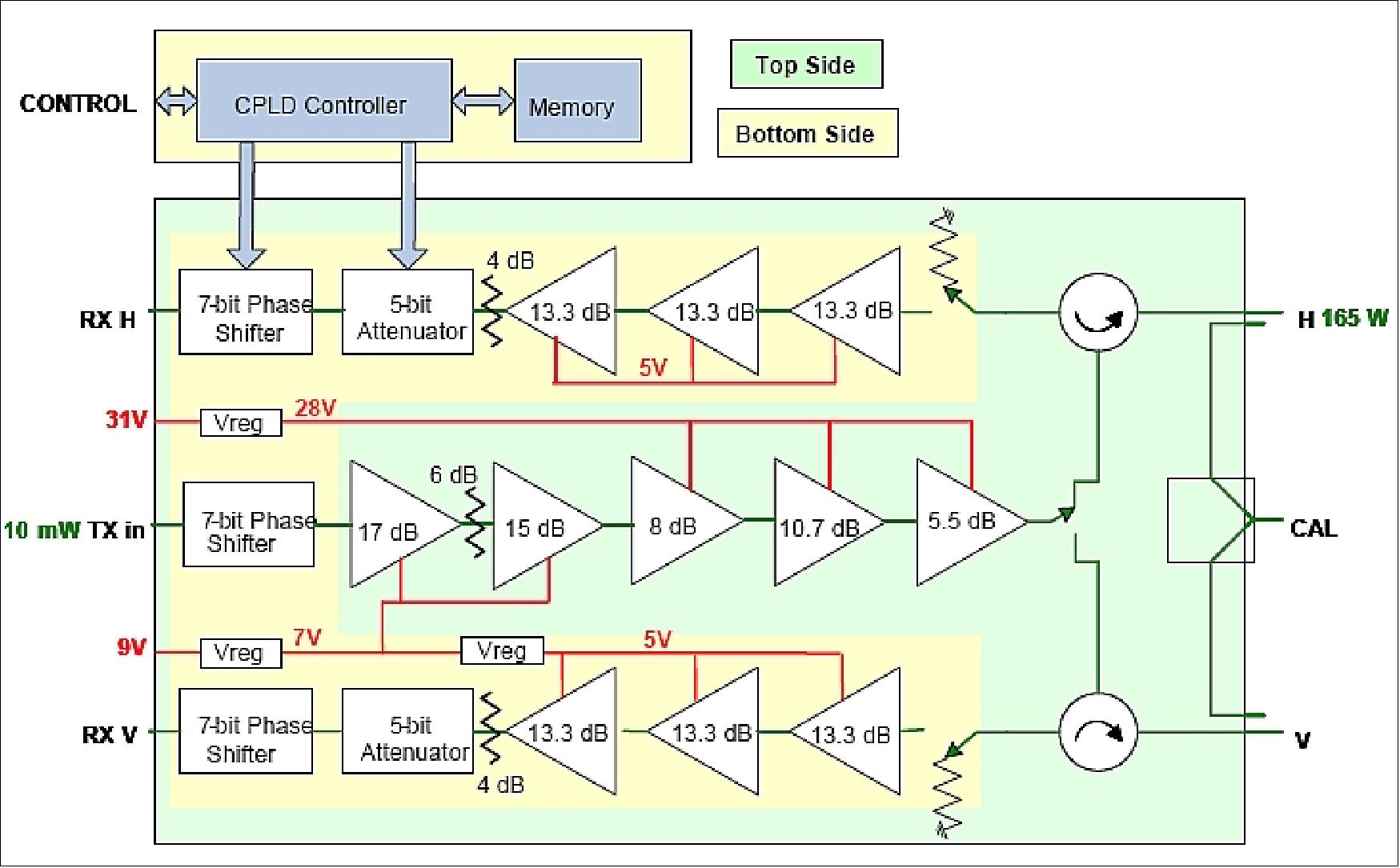

The T/R module block diagram is shown in Fig. 25. The module comprises two sides. The top side is populated with the power amplifiers of the transmit chain, polarization switch, calibration coupler, and circulators. The bottom side has receiver sub-assemblies (comprising LNA, attenuator and phase shifter), the transmit phase shifter, CPLD controller, memory, drivers, receive protection switches, and voltage regulators (Ref. 6).

Calibration: In order to form a beam that is scanned to the proper direction, it is necessary to calibrate the T/R modules and associated beamforming network. This calibration procedure is essentially a process of equalizing the phase and amplitude of each RF path to and from a particular antenna port. In transmit mode, compressed operation of the amplifiers prevents amplitude trimming with attenuators, so modules are positioned in the array so as to form a power taper that is symmetric about the azimuth and elevation directions. In receive mode, amplitude is trimmed to within 0.5 dB using a 5-bit digital attenuator (Ref. 6).

Considerable simplification of the calibration process is obtained as a result of the accuracy, linearity, isolation, and predictable temperature dependence of the beamforming networks. However, even with these simplifying attributes, calibration of this 24-element active array (as currently configured) is both labor-intensive and time-consuming (Ref. 6).
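The receive-side amplitude trimming can be sketched as choosing, for each path, the attenuator code that brings its gain closest to that of the weakest path. This is an illustrative procedure rather than the documented JPL calibration: it assumes a 0.5 dB attenuator step (taken from the "attenuation precision" entry in the T/R module specification) and uses made-up measured gains.

```python
ATTENUATOR_STEP_DB = 0.5   # assumed LSB of the digital attenuator
ATTENUATOR_BITS = 5


def attenuator_codes(measured_gains_db):
    """Pick a code per path so all gains approach the weakest path's gain."""
    target_db = min(measured_gains_db)
    max_code = (1 << ATTENUATOR_BITS) - 1
    codes = []
    for gain_db in measured_gains_db:
        code = round((gain_db - target_db) / ATTENUATOR_STEP_DB)
        codes.append(max(0, min(max_code, code)))
    return codes


def residual_spread_db(measured_gains_db, codes):
    """Peak-to-peak gain difference remaining after trimming."""
    trimmed = [g - c * ATTENUATOR_STEP_DB
               for g, c in zip(measured_gains_db, codes)]
    return max(trimmed) - min(trimmed)


# Hypothetical measured receive gains (dB) for four RF paths.
gains_db = [27.3, 26.1, 28.0, 26.6]
codes = attenuator_codes(gains_db)
spread_db = residual_spread_db(gains_db, codes)
print(codes, round(spread_db, 2))
```

Because each path is rounded to the nearest 0.5 dB step, the residual spread cannot exceed one step as long as no path needs more attenuation than the available range, which is consistent with the stated trim of within 0.5 dB.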

Planned UAVSAR onboard processing capabilities:

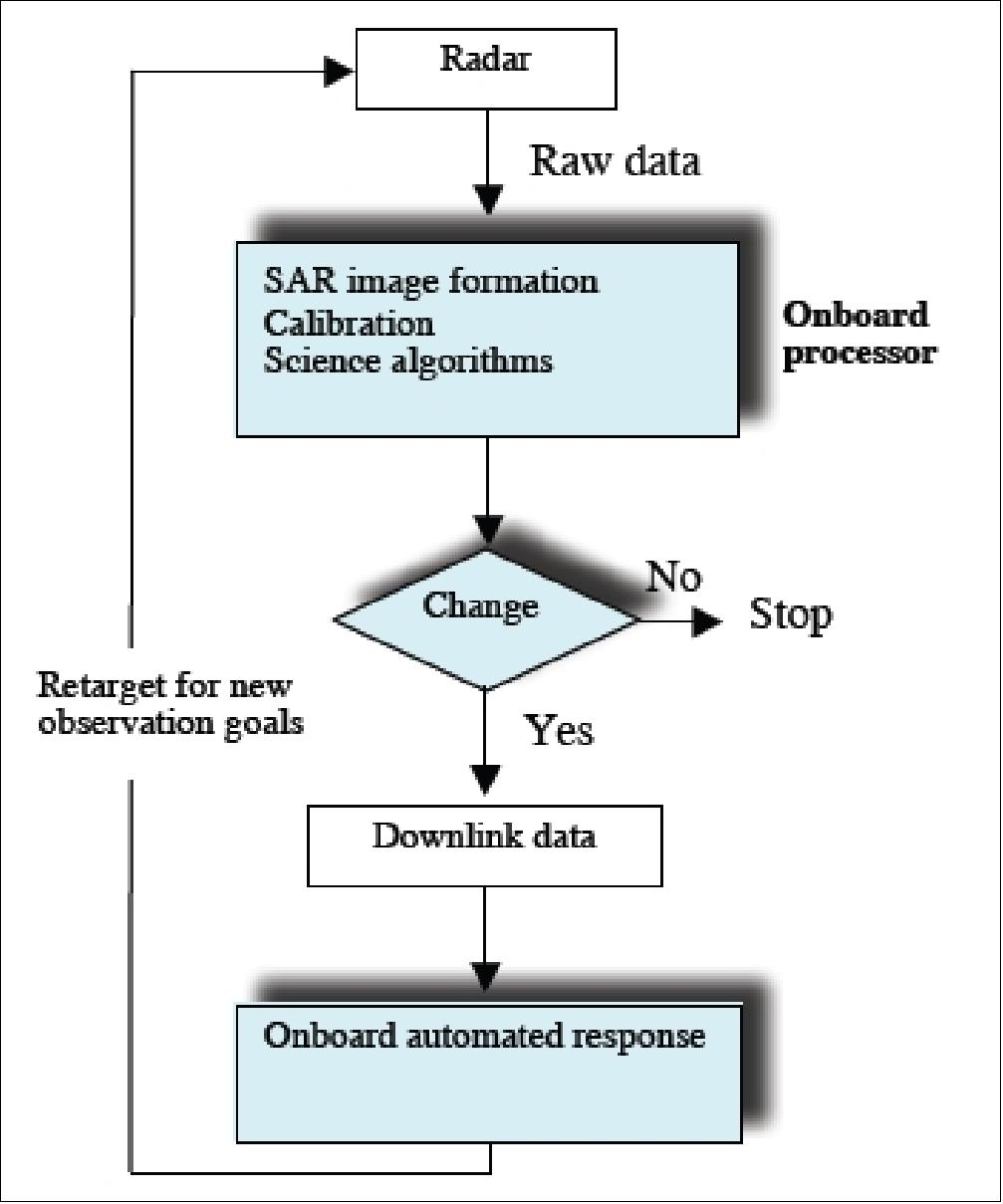

A future extension of the UAVSAR system calls for an autonomous disturbance detection and monitoring system with imaging radar that combines the unique capabilities of the imaging radar with high throughput onboard processing technology and onboard automated response capability based on specific science algorithms. This smart sensor development leverages off recently developed technologies in real-time onboard synthetic aperture radar (SAR) processor and onboard automated response software as well as science algorithms previously developed for radar remote sensing applications. 30)

The challenges are the radar's high raw data rate, requiring large onboard data storage and high downlink capability, and low data latency, requiring delivery of perishable information in time to be of use. Recent onboard SAR processor development (for the DoD Space Based Radar program) is the first step towards reducing the downlink data rate. High fidelity polarimetric and interferometric SAR (InSAR) processing technology will reduce the downlink data rate by orders of magnitude. In particular, the onboard processing capability will contribute to several radar-based mission concepts for monitoring natural hazards and the global carbon cycle. Forest fire and hurricane-induced damages on coastal landscapes and forests are considered the two most important disturbances of natural ecosystems and threats to human habitats.

The change detection concept under development is based on NASA's AIST-02 (Advanced Information Systems Technology) project to provide innovative on-orbit and ground capabilities for the communication, processing, and management of remotely sensed data and the efficient generation of data products and knowledge. The ASE (Autonomous Sciencecraft Experiment) implementation on the EO-1 (Earth Observing-1) mission, operational as of 2008 and providing automated disturbance detection and a monitoring capability for forest fire and hurricane-induced damage applications, serves as a conceptual starter for the smart sensor implementation on UAVSAR.

In the UAVSAR smart sensor concept (Figure 26), raw data from the radar observation are routed to the onboard processor via a high-speed serial interface. The onboard processor will perform SAR image formation in real time on two raw data streams, which could be data of two different polarization combinations or data from two different interferometric channels. The onboard processor will generate real-time high resolution imagery for both channels. The onboard processor will also execute calibration routines and science algorithms appropriate for the specific radar application.

Autonomous detection is performed by an intelligent software routine designed to detect specific disturbances based on the results of science processing. If no change is detected, the process stops and the results are logged. If “change” due to specific disturbances is detected, the onboard automated response software will plan new observations to continue monitoring the progression of the disturbance. The new observation plan is routed to the spacecraft or aircraft computer to re-target the platform for new radar observations.
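The detect-then-respond flow can be sketched with a simple change test. The source does not specify the detection algorithm, so the example swaps in a generic log-ratio test on backscatter power, a common SAR change-detection approach; the 3 dB threshold, the function names, and the shape of the returned plan are all hypothetical.

```python
import math


def changed_pixels(before, after, threshold_db=3.0):
    """Indices where backscatter power changed by more than threshold_db.

    before/after are co-registered, strictly positive power values.
    """
    hits = []
    for index, (b, a) in enumerate(zip(before, after)):
        if abs(10.0 * math.log10(a / b)) > threshold_db:
            hits.append(index)
    return hits


def automated_response(before, after, threshold_db=3.0):
    """Log the result if nothing changed; otherwise request a revisit."""
    hits = changed_pixels(before, after, threshold_db)
    if not hits:
        return {"status": "no change logged", "new_observation": None}
    return {"status": "change detected",
            "new_observation": {"revisit_pixels": hits}}


# Toy example: only the second pixel drops by about 7 dB.
reference_pass = [1.0, 1.0, 1.0, 1.0]
repeat_pass = [1.1, 0.2, 1.0, 0.9]
response = automated_response(reference_pass, repeat_pass)
print(response)
```

In the concept described above, the returned observation request would be handed to the planning software, which re-targets the platform.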

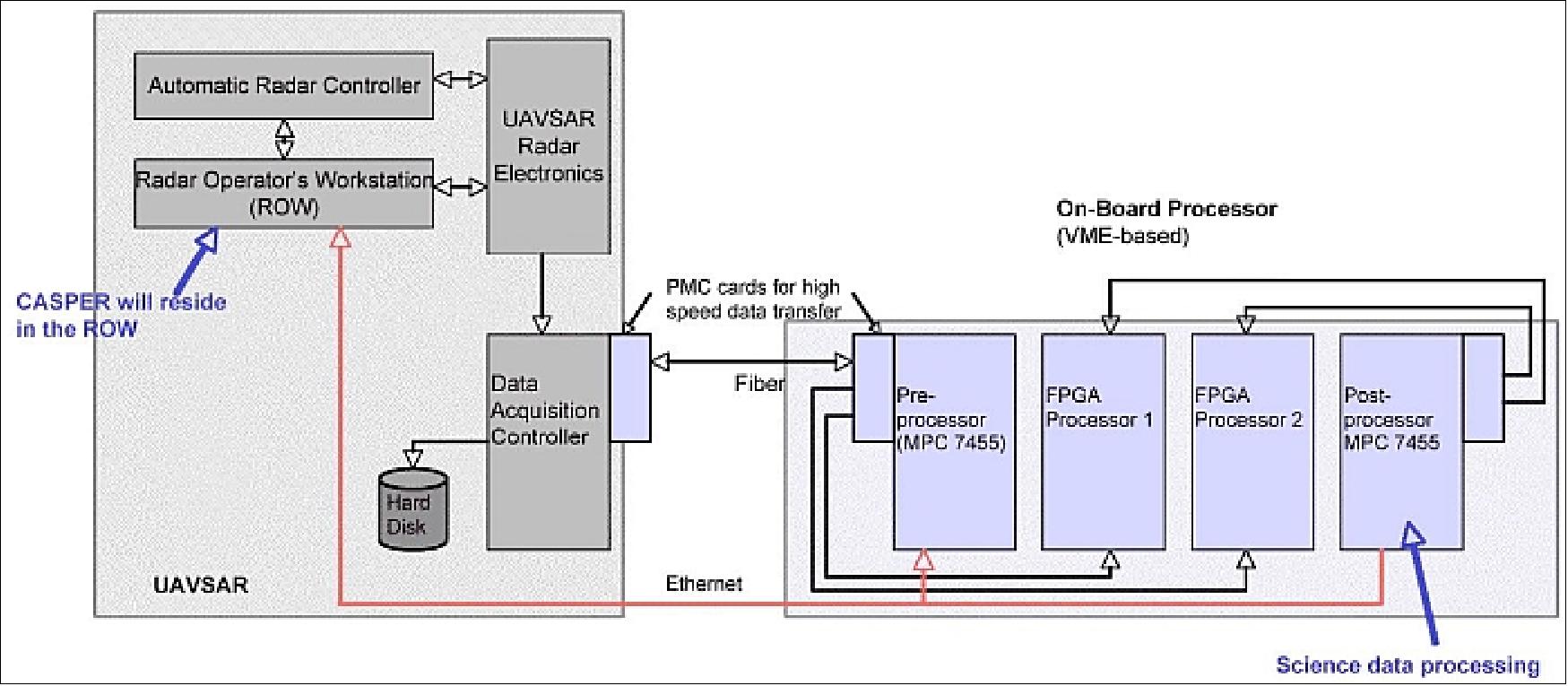

The hardware for the prototype autonomous system is a self-contained VME (Versa Module Eurocard) chassis with single board computers and FPGA (Field Programmable Gate Array) processor boards, high-speed serial interfaces for data routing, and an Ethernet connection for processor control (Figure 27). The VME-based change detection on-board processor (CDOP) consists of two custom FPGA boards, each with two Xilinx Virtex II-Pro FPGAs and large high-speed SRAM (Static Random Access Memory) to perform real-time SAR image formation, and a custom fiber-channel-to-RocketIO interface card to handle data transfer rates in excess of 1 Gbit/s between the UAVSAR, processor components, and onboard memory.

Two identical FPGA boards are utilized in order to perform SAR image formation of two raw data channels concurrently. COTS G4 PowerPC cards are used for preprocessing and polarimetric or interferometric postprocessing. The processor control software consists of real-time, multi-threaded code that runs on the G4 CPU to route data from UAVSAR's data acquisition controller to the two FPGA processors and to generate processor parameters for the two processor channels.

The G4 PowerPC post-processor will perform the science data processing task, whereas autonomous detection and monitoring capability, as well as the CASPER (Continuous Activity Scheduling Planning Execution and Replanning) software, will reside either on UAVSAR's Radar Operator's Workstation (ROW) or a separate laptop computer.

The UAVSAR onboard processor data flow is shown in Figure 28. Live and/or archived raw data are first unpacked and reformatted before being routed to the FPGA processor. The preprocessor generates the phase correction factors for motion compensation and processing parameters for SAR image formation from the ephemeris data. In the FPGA processor, range compression focuses the image in the cross track direction. The presum module resamples the pulses to a user-specified along track location and spacing to reduce the number of pulses to process in the along track (azimuth) direction while reducing the noise on each radar pulse.
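Range compression, the first focusing step in this data flow, is a matched filter: each received line is correlated with a replica of the transmitted chirp. The pure-Python sketch below illustrates the principle on a single noiseless point target. It is not the FPGA implementation; the 100 MHz complex sampling rate, the 64-sample chirp, and the target delay are assumptions chosen to keep the example small, with only the 80 MHz bandwidth taken from the instrument table.

```python
import cmath
import math


def chirp(n_samples, bandwidth_hz, sample_rate_hz):
    """Baseband linear FM chirp centred on zero frequency."""
    duration_s = n_samples / sample_rate_hz
    rate_hz_per_s = bandwidth_hz / duration_s
    return [
        cmath.exp(1j * math.pi * rate_hz_per_s
                  * (i / sample_rate_hz - duration_s / 2.0) ** 2)
        for i in range(n_samples)
    ]


def range_compress(echo, reference):
    """Magnitude of the correlation of the echo with the chirp replica."""
    n = len(reference)
    profile = []
    for lag in range(len(echo) - n + 1):
        acc = sum(echo[lag + i] * reference[i].conjugate() for i in range(n))
        profile.append(abs(acc))
    return profile


replica = chirp(64, 80e6, 100e6)
target_delay = 40                      # delay of the point target, in samples
echo_line = [0j] * 200
for i, sample in enumerate(replica):   # place one delayed copy of the chirp
    echo_line[target_delay + i] += sample

compressed = range_compress(echo_line, replica)
peak_bin = max(range(len(compressed)), key=compressed.__getitem__)
print(peak_bin, round(compressed[peak_bin], 3))
```

The 64-sample chirp collapses into a peak at the target delay, with a peak magnitude equal to the number of chirp samples. A real processor would normally perform this correlation with FFTs for speed.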

Motion compensation is the process where the radar signal data are resampled from the actual path of the antenna to an idealized path called the reference path. This process is necessary to align the phase centers of two data channels to the same reference path to ensure maximum correlation. Azimuth processing focuses the image in the along track direction. Radiometric and phase calibrations are necessary to generate polarimetric or interferometric science data products.
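The phase part of motion compensation can be written down compactly. The sketch below shows only the phase correction for a known difference between the actual and reference slant ranges; a full implementation also resamples the data in range, which is omitted here. It assumes the two-way phase convention exp(-j4πr/λ) and the 23.79 cm wavelength from the instrument table, and the function name is hypothetical.

```python
import cmath
import math

WAVELENGTH_M = 0.2379  # from the instrument parameter table


def mocomp_phase_correct(sample, actual_range_m, reference_range_m,
                         wavelength_m=WAVELENGTH_M):
    """Rotate a sample so its phase matches the reference-path range.

    Assumes the echo phase is exp(-j * 4*pi * range / wavelength).
    """
    delta_m = reference_range_m - actual_range_m
    return sample * cmath.exp(-1j * 4.0 * math.pi * delta_m / wavelength_m)


def ideal_echo(range_m, wavelength_m=WAVELENGTH_M):
    """Unit-amplitude echo from a point target at the given slant range."""
    return cmath.exp(-1j * 4.0 * math.pi * range_m / wavelength_m)


# The antenna is 3 cm farther from the target than the reference path.
actual_m, reference_m = 20000.03, 20000.0
corrected = mocomp_phase_correct(ideal_echo(actual_m), actual_m, reference_m)
phase_error = abs(corrected - ideal_echo(reference_m))
print(phase_error)
```

A 3 cm range offset is about an eighth of a wavelength, which corresponds to roughly 90º of two-way phase, so even centimetre-level deviations from the reference path matter for interferometric correlation.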

The post-processor geolocates the two SAR images with information from the ephemeris data and generates application-specific science data products such as the biomass and fuel load map. The science data products are routed via Ethernet to a laptop or UAVSAR's ROW for disturbance detection and monitoring.

The UAVSAR-based automated disturbance detection and monitoring system is expected to be operational by 2010.

1) S. Hensley, K. Wheeler, G. Sadowy, C. Jones, S. Shaffer, H. Zebker, T. Miller, B. Heavey, E. Chuang, R. Chao, K. Vines, K. Nishimoto, J. Prater, B. Carrico, N. Chamberlain, J. Shimada, M. Simard, B. Chapman, R. Muellerschoen, C. Le, T. Michel, G. Hamilton, D. Robison, G. Neumann, R. Meyer, P. Smith, J. Granger, P. Rosen, D. Flower, R. Smith, “The UAVSAR Instrument: Description and Test Plans,” NSTC2007 (NASA Science and Technology Conference 2007), College Park, MD, USA, June 19-21, 2007, URL: http://esto.nasa.gov/conferences/nstc2007/papers/Hensley_Scott_B4P1_NSTC-07-0036.pdf

2) P. A. Rosen, S. Hensley, K. Wheeler, G. Sadowy, T. Miller, S. Shaffer, R. Muellerschoen, C. Jones, H. Zebker, S. Madsen, “UAVSAR: A New NASA Airborne SAR System for Science and Technology Research,” 2006 IEEE Conference on Radar, April 24-27, 2006, Verona, NY, USA, URL: http://trs-new.jpl.nasa.gov/dspace/bitstream/2014/40223/1/06-0357.pdf

3) S. N. Madsen, S. Hensley, K. Wheeler, G. A. Sadowy, T. Miller, R. Muellerschoen, Y. Lou, P. A. Rosen, “UAV-based L-band SAR with precision flight path control,” Proceedings of SPIE, 'Enabling Sensor and Platform Technologies for Spaceborne Remote Sensing,' George J. Komar, Jinxue Wang, Toshiyoshi Kimura, Editors, Vol. 5659, January 2005, pp. 51-60

4) J. Lee, B. Strovers, V. Lin, “C-20A/GIII Precision Autopilot Development In Support of NASA's UAVSAR Program,” NSTC2007, College Park, MD, USA, June 19-21, 2007, URL: http://esto.nasa.gov/conferences/nstc2007/papers/Lee_James_B4P3_NSTC-07-0013.pdf

5) N. Chamberlain, M. Zawadzki, G. Sadowy, E. Oakes, K. Brown, R. Hodges, “The UAVSAR Phased Array Aperture,” Proceedings of the 2006 IEEE/AIAA Aerospace Conference, Big Sky, MT, USA, March 4-11, 2006, URL: http://trs-new.jpl.nasa.gov/dspace/bitstream/2014/38533/1/05-3868.pdf

6) N. Chamberlain, G. Sadowy, “The UAVSAR Transmit / Receive Module,” Proceedings of the 2008 IEEE Aerospace Conference, Big Sky, MT, USA, March 1-8, 2008

7) “NASA-NOAA Tech Will Aid Marine Oil Spill Response,” NASA/JPL, 13 December 2021, URL: https://www.jpl.nasa.gov/news/nasa-noaa-tech-will-aid-marine-oil-spill-response?utm_source=iContact&utm_medium=email&utm_campaign=nasajpl&utm_content=earth20211213-1

8) “A Mosaic of Fire Data,” NASA Earth Observatory, Image of the Day for 6 February 2021, URL: https://earthobservatory.nasa.gov/images/147872/a-mosaic-of-fire-data

9) “NASA Takes Flight to Study California's Wildfire Burn Areas,” NASA/JPL, 15 September 2020, URL: https://www.jpl.nasa.gov/news/news.php?release=2020-176

10) “Mapping a Slow-Motion Landslide,” NASA Earth Observatory, 5 June 2020, URL: https://earthobservatory.nasa.gov/images/146808/mapping-a-slow-motion-landslide

11) Xie Hu, Roland Bürgmann, William H. Schulz & Eric J. Fielding, “Four-dimensional surface motions of the Slumgullion landslide and quantification of hydrometeorological forcing,” Nature Communications, Volume 11, Article number: 2792, https://doi.org/10.1038/s41467-020-16617-7, Published: 03 June 2020, URL: https://www.nature.com/articles/s41467-020-16617-7.pdf

12) Alan Buis, Carol Rasmussen, “NASA Studies a Rarity: Growing Louisiana Deltas,” NASA/JPL, Feb. 8, 2017, URL: http://www.jpl.nasa.gov/news/news.php?release=2017-026

13) “Scientists Improve Maps of Subsidence in New Orleans,” NASA Earth Observatory, May 25, 2016, URL: http://earthobservatory.nasa.gov/IOTD/view.php?id=88078

14) Cathleen E. Jones, Karen An, Ronald G. Blom, Joshua D. Kent, Erik R. Ivins, David Bekaert, “Anthropogenic and geologic influences on subsidence in the vicinity of New Orleans, Louisiana,” Journal of Geophysical Research, May 16, 2016, DOI: 10.1002/2015JB012636, URL: http://onlinelibrary.wiley.com/doi/10.1002/2015JB012636/full

15) Steve Cole, Alan Buis, “NASA Flies Radar South on Wide-Ranging Scientific Expedition,” NASA, April 3, 2013, URL: http://www.nasa.gov/home/hqnews/2013/apr/HQ_13-097_Science_Radar_Flights.html

16) Alan Buis, “NASA Radar Penetrates Thick, Thin of Gulf Oil Spill,” NASA/JPL, October 25, 2012, URL: http://www.jpl.nasa.gov/news/news.php?release=2012-337

17) Alan Buis, Beth Hagenauer, “NASA Radar to Study Volcanoes in Alaska, Japan,” NASA/JPL, Oct. 2, 2012, URL: http://www.jpl.nasa.gov/news/news.php?release=2012-308

18) Yunling Lou, Scott Hensley, Roger Chao, Cathleen Jones, Tim Miller, Ron Muellerschoen, Yang Zheng, “UAVSAR Instrument: Current Operations and Planned Upgrades,” Proceedings of IGARSS (International Geoscience and Remote Sensing Symposium), Vancouver, Canada, July 24-29, 2011

19) Yunling Lou, Scott Hensley, Roger Chao, Elaine Chapin, Brandon Heavy, Cathleen Jones, Timothy Miller, Chris Naftel, David Fratello, “UAVSAR Instrument: Current Operations and Planned Upgrades,” ESTF 2011 (Earth Science Technology Forum 2011), Pasadena, CA, USA, June 21-23, 2011, URL: http://esto.nasa.gov/conferences/estf2011/papers/Lou_ESTF2011_UAVSAR.pdf

20) “NASA Radar Images Show How Mexico Quake Deformed Earth,” NASA, June 22, 2010, URL: http://www.nasa.gov/topics/earth/features/UAVSAR20100623.html

21) Kenneth Vines, Roger Chao, “Autonomous Deployment of the UAVSAR Radar Instrument,” Proceedings of the 2010 IEEE Aerospace Conference, Big Sky, MT, USA, March 6-13, 2010

22) Yunling Lou, Scott Hensley, Roger Chao, Elaine Chapin, Brandon Heavy, Cathleen Jones, Timothy Miller, Chris Naftel, David Fratello, “UAVSAR Instrument: Current Operations and Planned Upgrades,” ESTF 2011 (Earth Science Technology Forum 2011), Pasadena, CA, USA, June 21-23, 2011, URL: http://esto.nasa.gov/conferences/estf2011/papers/Lou_ESTF2011_UAVSAR.pdf

23) “NASA airborne radar to study quake faults in Haiti, Dominican Republic,” Jan. 27, 2010, URL: http://www.preventionweb.net/english/professional/news/v.php?id=12481

24) “JPL Airborne Radar Captures Its First Image of Post-Quake Haiti,” NASA/JPL, Feb. 1, 2010, URL: http://www.jpl.nasa.gov/news/news.php?release=2010-037

25) M. Simard, N. Pinto, R. Dubayah, S. Hensley, “UAVSAR's first campaign over temperate and boreal forests,” American Geophysical Union, Fall Meeting 2009, San Francisco, CA

26) http://photojournal.jpl.nasa.gov/catalog/PIA12189

27) “NASA Completes Icelandic Portion of Arctic Ice Radar Mission,” June 25, 2009, URL: http://www.nasa.gov/centers/dryden/Features/G-III_uavsar_09.html

28) Cathleen Jones, Scott Hensley, Kevin Wheeler, Greg Sadowy, Scott Shaffer, Howard Zebker, Tim Miller, Brandon Heavey, Ernie Chuang, Roger Chao, Ken Vines, Kouji Nishimoto, Jack Prater, Bruce Carrico, Neil Chamberlain, Joanne Shimada, Marc Simard, Bruce Chapman, Ron Muellerschoen, Charles Le, Thierry Michel, Gary Hamilton, David Robison, Greg Neumann, Robert Meyer, Phil Smith, Jim Granger, Paul Rosen, Dennis Flower, Robert Smith, “Some First Results from the UAVSAR Instrument,” ESTC2008 (Earth Science Technology Conference 2008), June 24-26, 2008, College Park, MD, USA, URL: http://esto.nasa.gov/conferences/estc2008/papers/Jones_Cathleen_B5P3.pdf

29) http://uavsar.jpl.nasa.gov/

30) Y. Lou, S. Chien, R. Muellerschoen, S. Saatchi, “Autonomous Disturbance Detection and Monitoring System with UAVSAR,” NSTC2007 (NASA Science and Technology Conference 2007), College Park, MD, USA, June 19-21, 2007, URL: http://esto.nasa.gov/conferences/nstc2007/papers/Lou_Yunling_B4P2_NSTC-07-0095.pdf

The information compiled and edited in this article was provided by Herbert J. Kramer from his documentation of: “Observation of the Earth and Its Environment: Survey of Missions and Sensors” (Springer Verlag) as well as many other sources after the publication of the 4th edition in 2002. Comments and corrections to this article are always welcome for further updates (eoportal@symbios.space).